The Problem

A major Southeast Asian airline wanted to stop being an airline. Their new ambition was to become a digital platform that happened to operate flights. Chasing this ambition, they acquired multiple startups and launched parallel internal initiatives. The result was predictable: competing teams, duplicated capabilities, and a user experience that felt like seven apps duct-taped together.

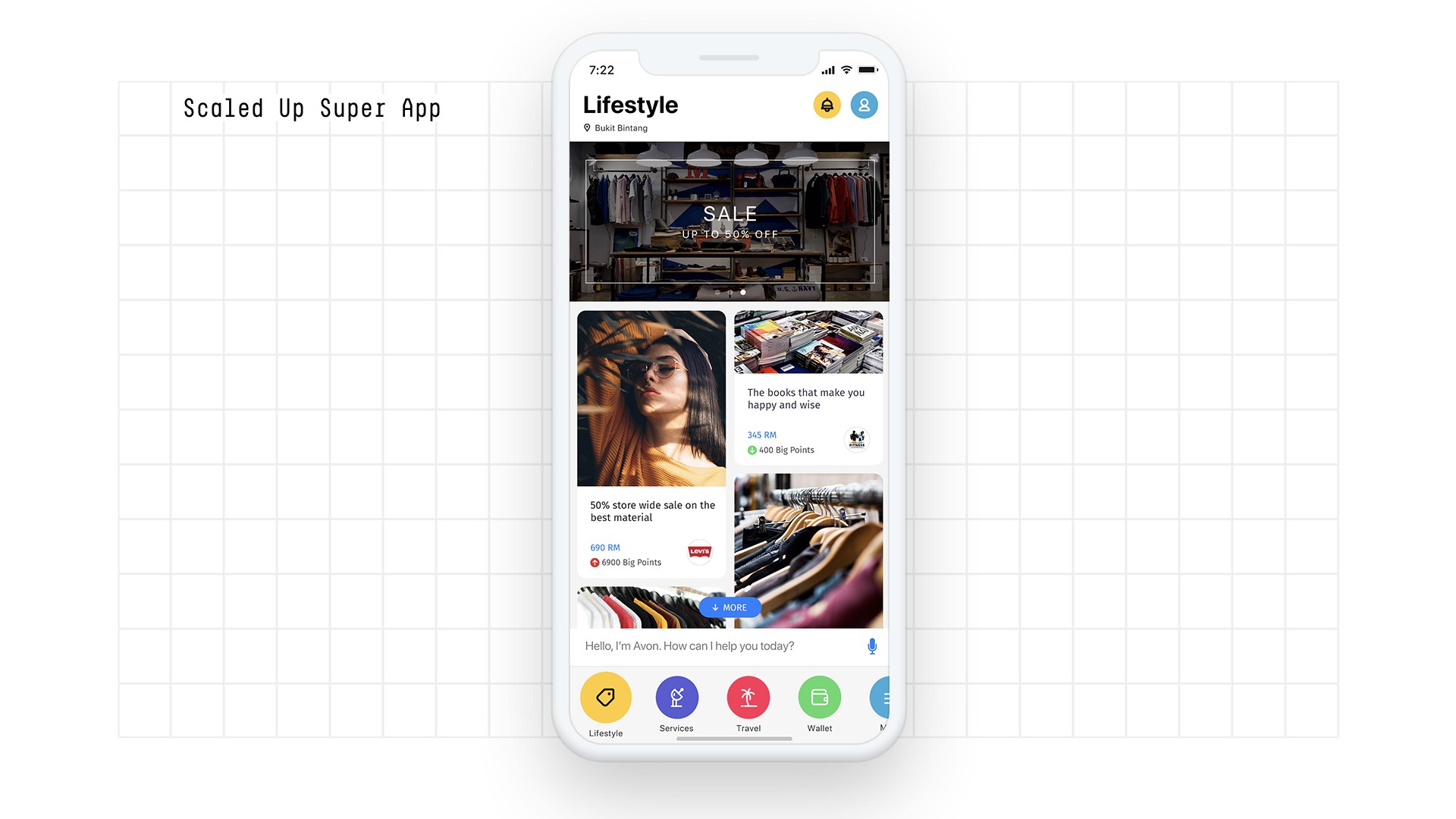

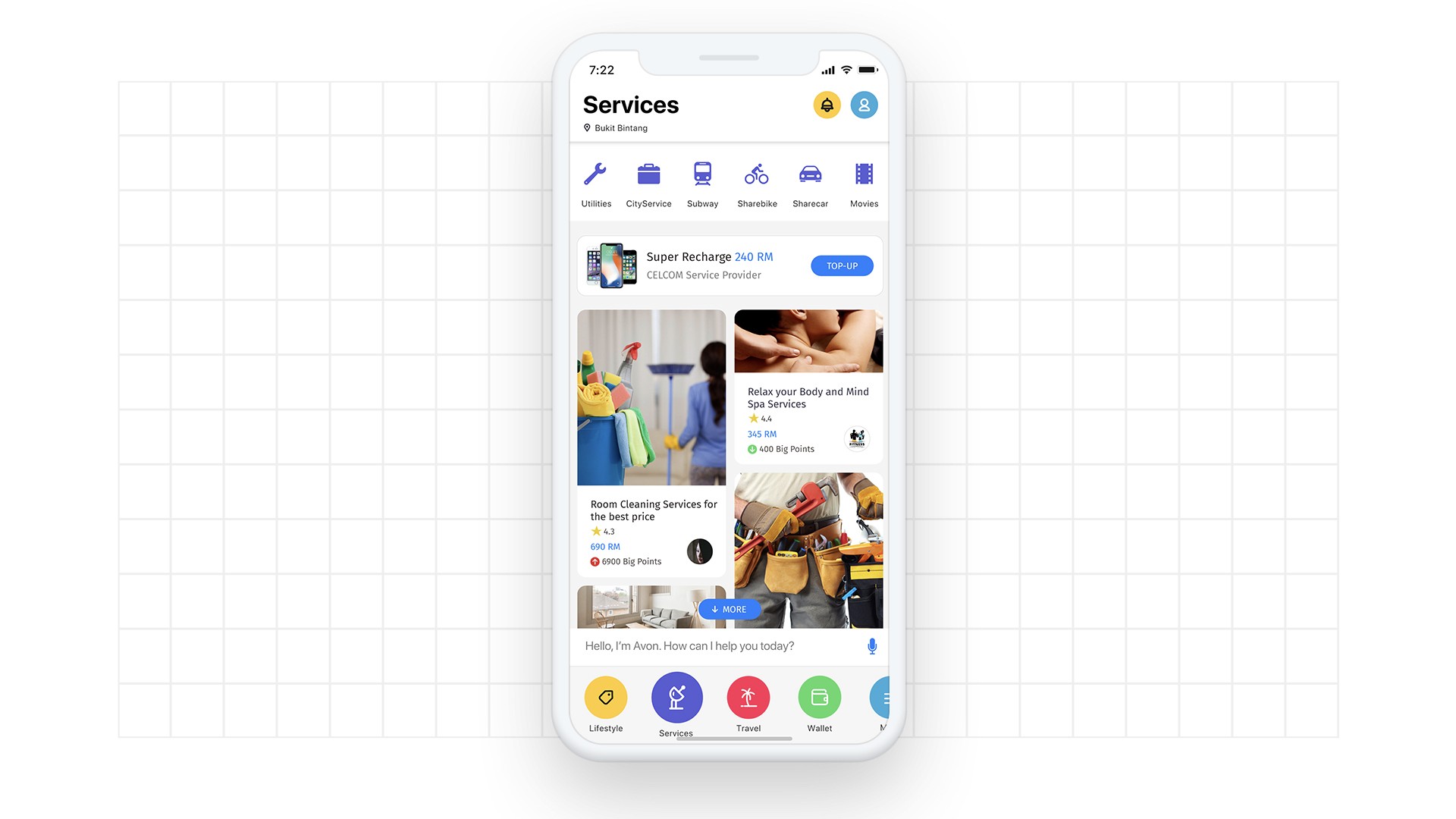

But the deeper problem wasn’t organizational. It was cognitive. Users landing in this expanding ecosystem faced a wall of options — flights, hotels, activities, food, transport, financial products — spread across multiple apps, sometimes with conflicting deals. Decision fatigue was killing engagement. People would open one app, jump to another, and leave in frustration.

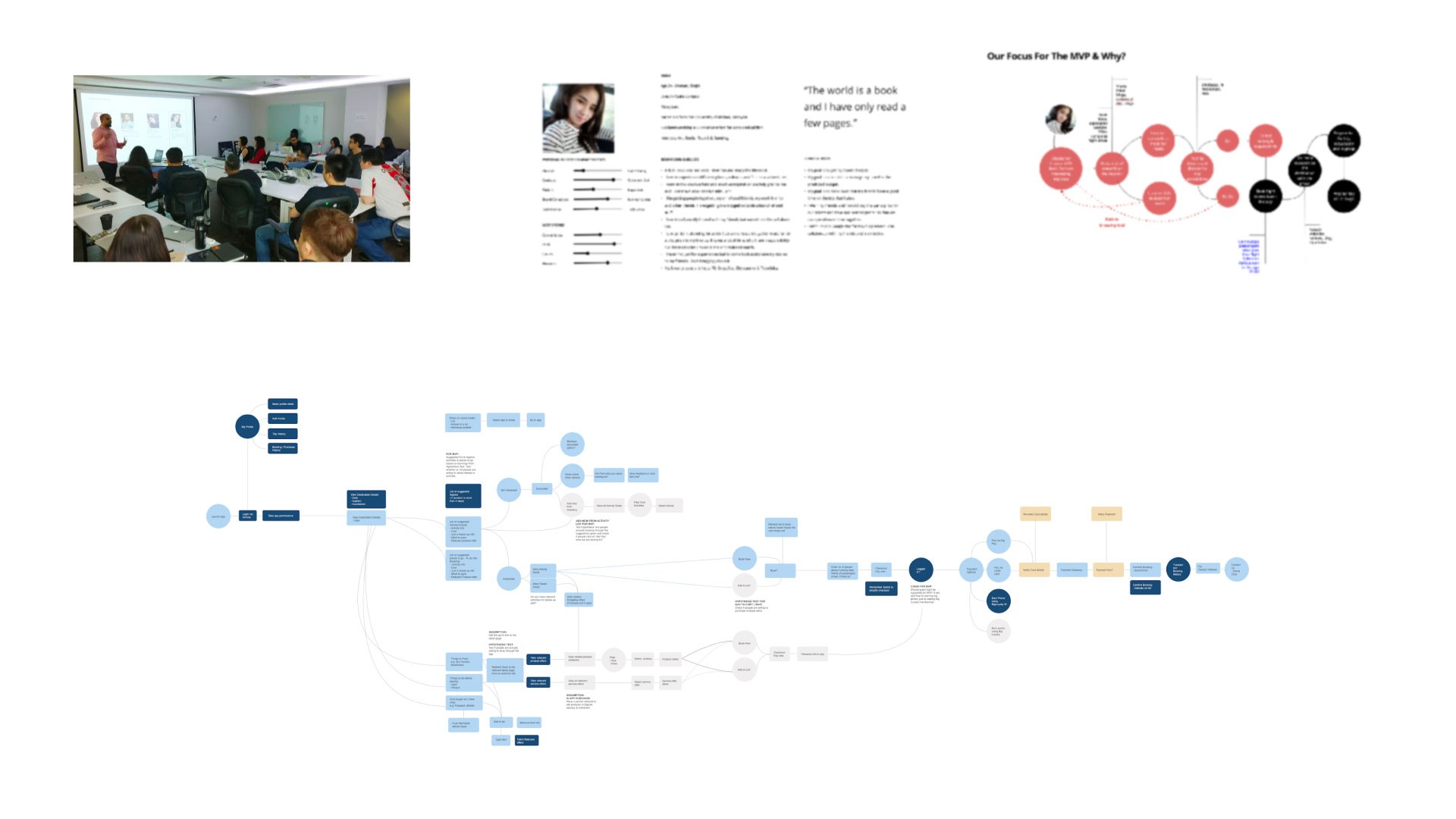

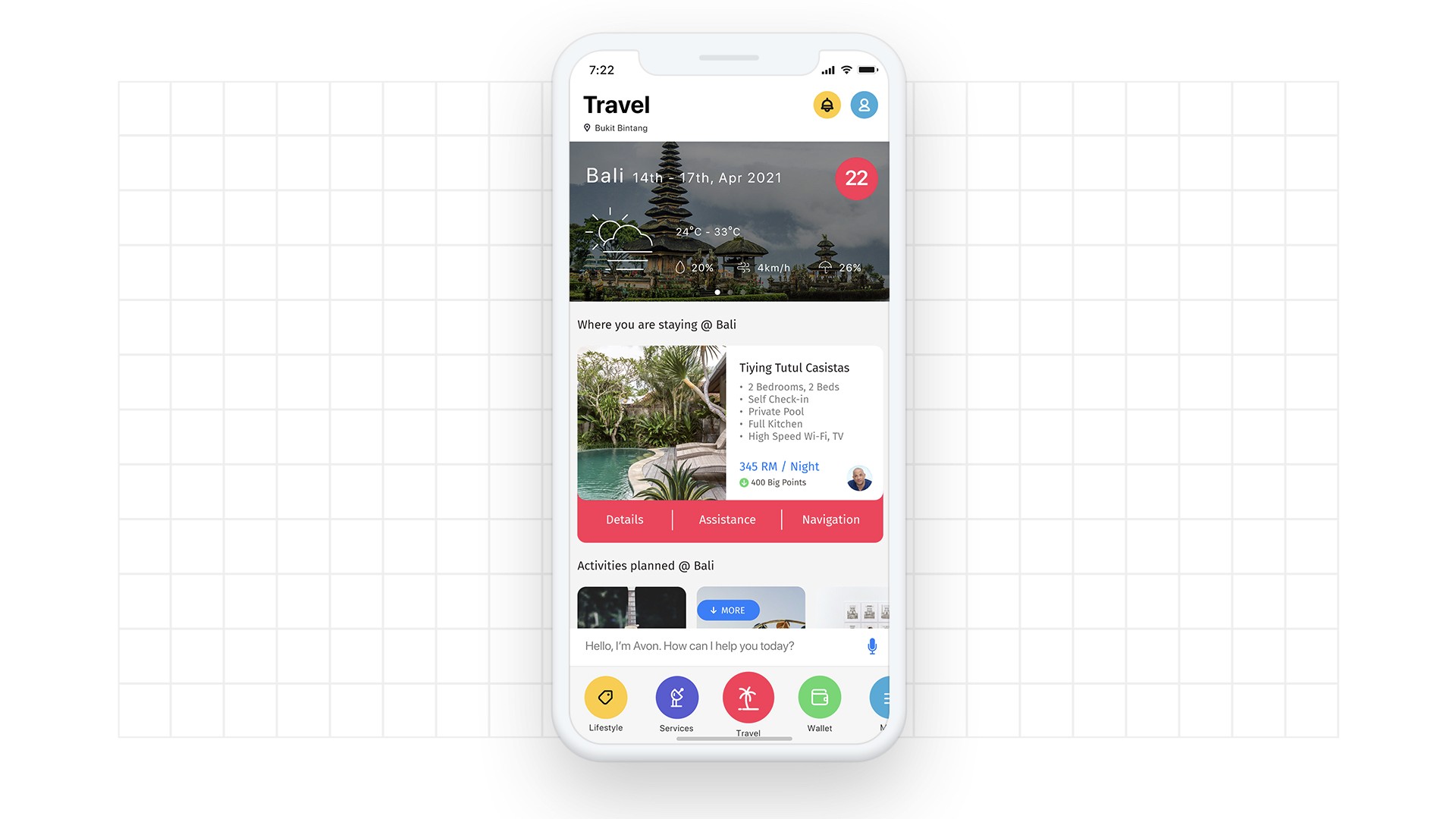

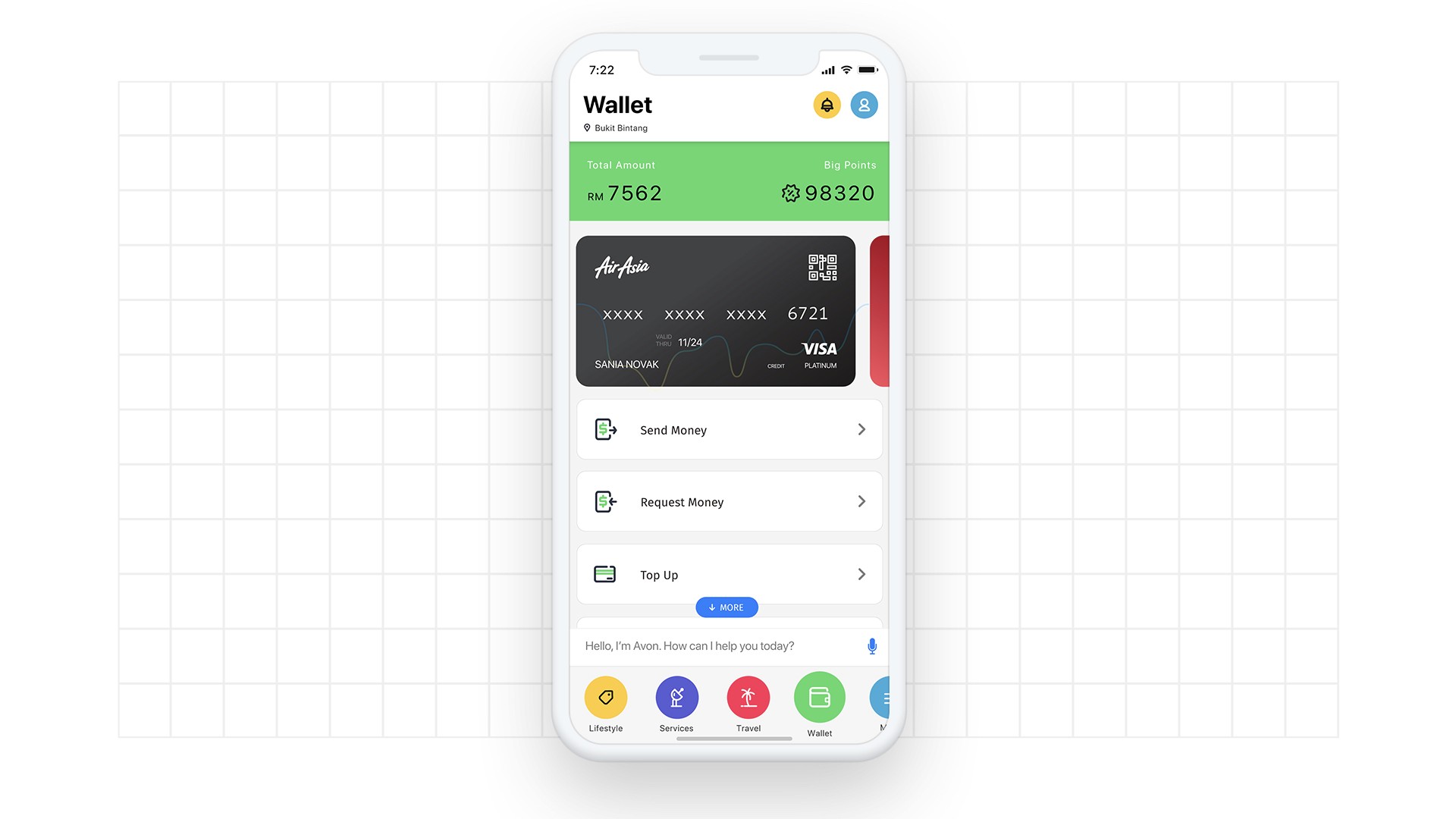

The solution hypothesis was a superapp — travel, lifestyle, payments, and more in a single unified experience. Using jobs-to-be-done to map user workflows, we’d design an experience that anticipated needs and surfaced personalised recommendations at the right moment.

The hardest version of this problem: how do you personalise recommendations when you know nothing about the user? That’s the cold start problem. And for a platform trying to onboard millions of users across dozens of services simultaneously, it wasn’t an edge case: nearly every user would start out as a stranger to nearly every service. My hypothesis was that solving the cold start problem wasn’t a technical prerequisite. It was the core design challenge that would drive the entire user experience.

The Approach

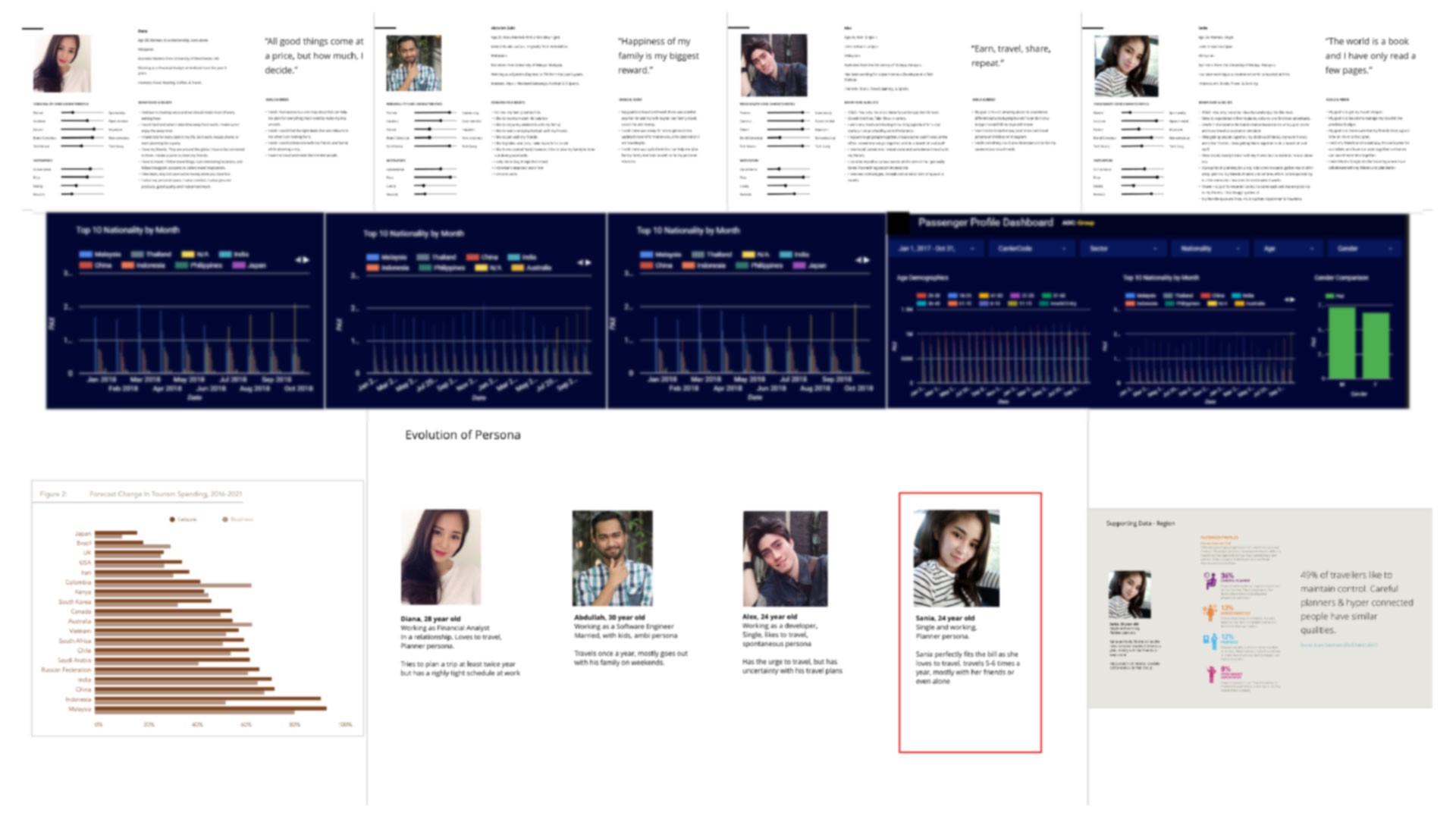

I started with a first-principles question: what does a good recommendation engine actually do? Strip away the ML jargon and you’re left with three variables: how much information is available, how much time the user has to decide, and how valuable the outcome feels. Every AI recommendation system navigates the tension between these three, in what Claude Shannon would recognise as a signal-to-noise problem applied to human preference. Most teams jump straight to collaborative filtering and wonder why it doesn’t work on day one. That’s because they’re optimizing for a steady state that doesn’t exist yet: collaborative filtering recommends by matching users with overlapping histories, and on day one there are no histories to overlap.
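To see why collaborative filtering stalls on day one, here is a minimal Python sketch of user-based collaborative filtering on hypothetical toy data. The user names, item IDs, and the `user_based_cf` helper are illustrative assumptions, not the production system. A brand-new user has an empty history, so every similarity score is zero and the method has nothing to recommend.

```python
from math import sqrt

# Hypothetical toy interaction data: 1 = the user booked or clicked the item.
ratings: dict[str, dict[str, float]] = {
    "alice": {"flight_bali": 1, "hotel_bali": 1, "spa_ubud": 1},
    "bob": {"flight_bali": 1, "hotel_bali": 1},
    "chen": {"flight_tokyo": 1, "ramen_tour": 1},
}


def cosine(a: dict[str, float], b: dict[str, float]) -> float:
    """Cosine similarity of two sparse vectors; 0.0 if either one is empty."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def user_based_cf(
    target: dict[str, float], others: dict[str, dict[str, float]], k: int = 2
) -> list[str]:
    """Rank items the target hasn't seen by similarity-weighted votes of the k nearest users."""
    neighbours = sorted(((cosine(target, r), u) for u, r in others.items()), reverse=True)[:k]
    scores: dict[str, float] = {}
    for sim, user in neighbours:
        if sim <= 0:
            continue  # no overlapping history means no evidence from this user
        for item, value in others[user].items():
            if item not in target:
                scores[item] = scores.get(item, 0.0) + sim * value
    return sorted(scores, key=lambda item: scores[item], reverse=True)
```

Bob, who shares two bookings with Alice, gets her third item (`spa_ubud`); an empty profile gets an empty list. That empty list is the cold start problem in one line.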

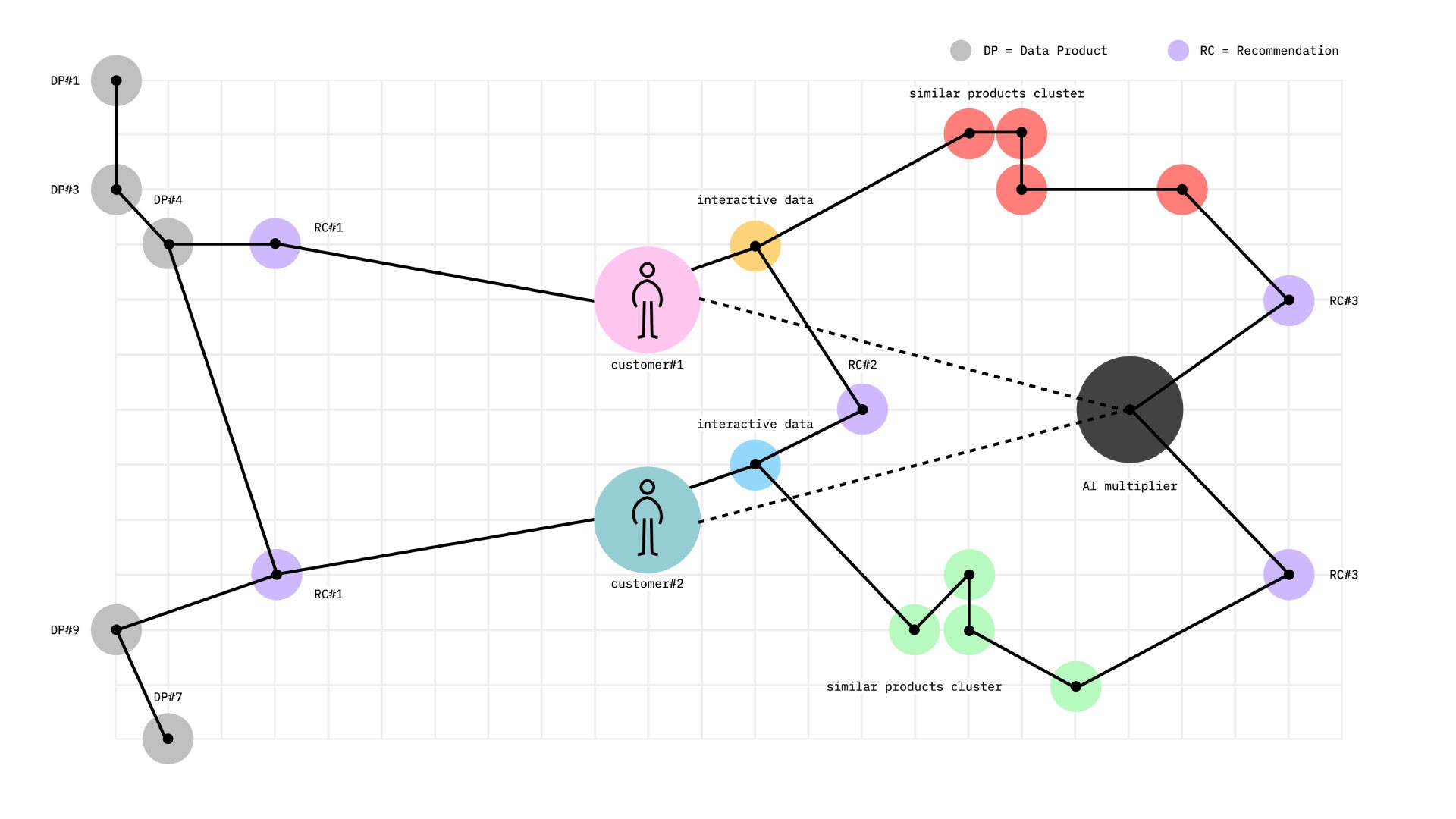

So I mapped out the problem in layers. Not “which algorithm should we use” but “what kind of recommendation is possible at each stage of the user relationship.” A brand-new user with zero history needs a fundamentally different personalization strategy than a user with six months of behavioral data. Treating them the same is the root cause of most recommendation engine failures.

I evaluated five distinct filtering approaches — popularity-based, collaborative filtering, content-based, association rule mining, and hybrid — not as competing options but as layers that activate progressively as data density increases. The key insight: each model has a natural “data threshold” below which the noise overwhelms the signal. Design the system to use each model only where it’s reliable, and you eliminate the cold start problem by definition.
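The progressive-activation idea can be sketched as a lookup from a user’s interaction count to the approaches that are trusted at that data density. This is a minimal Python sketch; the threshold values and strategy names are hypothetical placeholders that a real team would calibrate through offline evaluation.

```python
# Hypothetical data thresholds: minimum logged interactions before each
# approach is trusted. Real cut-offs would come from offline evaluation.
LAYERS: list[tuple[int, str]] = [
    (0, "popularity"),          # Layer 1: needs no personal history at all
    (10, "content_based"),      # Layer 2: needs a few liked items to compare against
    (10, "association_rules"),  # Layer 2: needs enough sessions to see patterns
    (50, "collaborative"),      # Layer 2: needs overlap with other users' histories
    (200, "hybrid_ml"),         # Layer 3: enough data to train a blended model
]


def active_strategies(interactions: int) -> list[str]:
    """Return every approach whose threshold this user's history has crossed.

    Lower layers stay active as fallbacks instead of being switched off.
    """
    return [name for minimum, name in LAYERS if interactions >= minimum]
```

Keeping lower layers active means the blend degrades gracefully: if a higher layer has too little data for a particular request, the simpler approaches are still there to answer it.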

The other critical decision was making data collection part of the product experience rather than a background process. Most teams treat user onboarding and personalization as separate concerns. I designed them as one system — the onboarding IS the data collection, and it needs to feel valuable to the user on its own terms, not like a survey they’re enduring.

What We Built

A three-layer recommendation engine with a gamified data bootstrapping front-end.

The architecture was designed as a flywheel, not a pipeline. Each user interaction, even a bad one, fed signal back into the system, making it more accurate for the next user. More data fed better AI recommendations, which drove more engagement, which generated more data. Growth itself was the optimization mechanism: every wave of new users made the system stronger, not weaker.

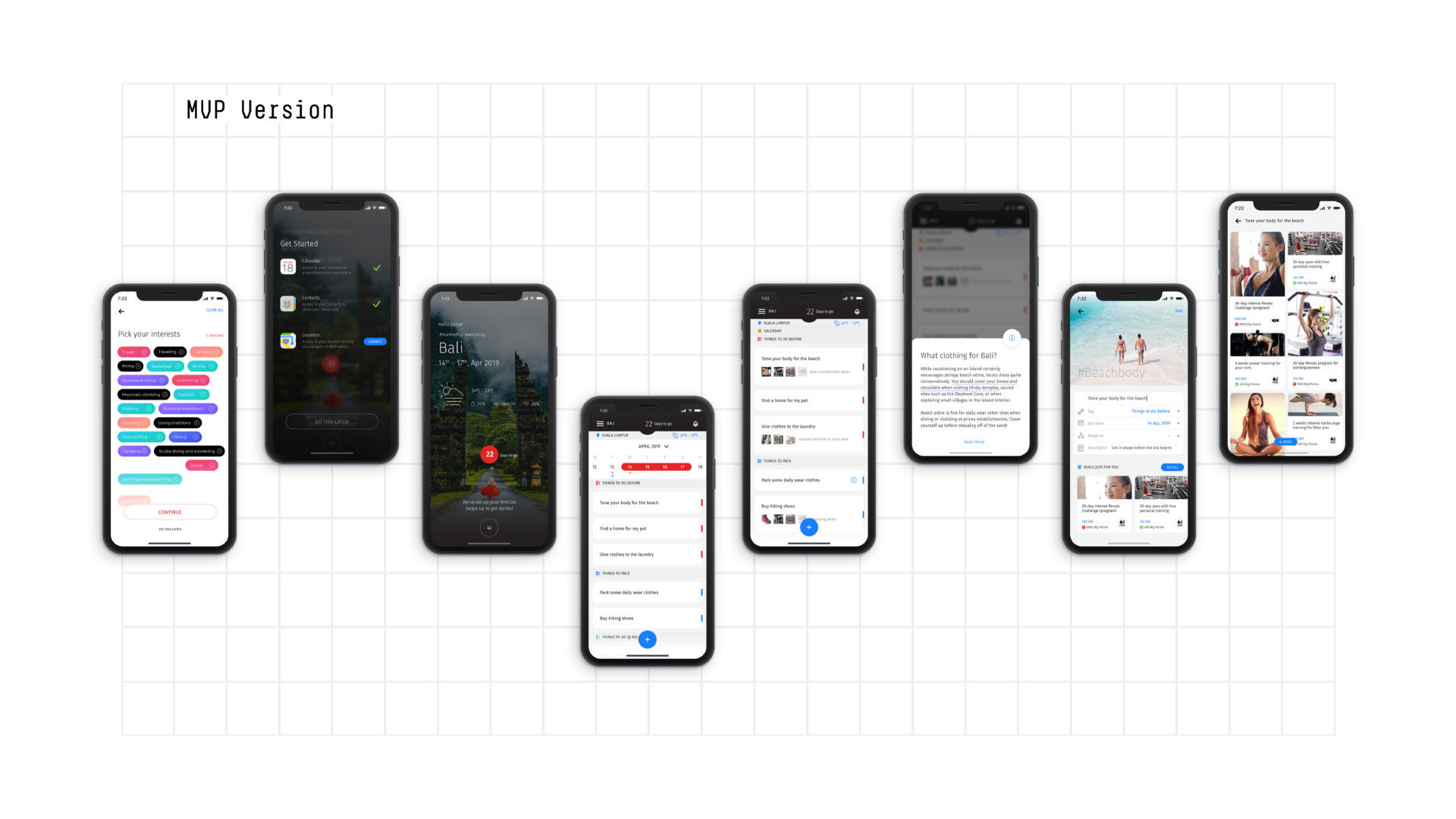

Layer 1 (Cold Start Solver): Interactive onboarding where users selected interests through visual, game-like bubbles — not a boring preference form. This captured explicit preferences while implicit signals (location, device, calendar context) filled gaps. The combination gave us enough signal to generate meaningful recommendations from the first session. This was the cold start killer: make the zero-data state impossible by designing the product to collect data as a natural part of first use.
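A rules-based Layer 1 can be sketched as a hand-weighted score over explicit bubble selections and implicit context. The Python below is a minimal sketch under stated assumptions: the `Item` and `ColdStartProfile` fields, the weights, and the idea of encoding calendar or device context as tags are all hypothetical, chosen only to show how a few taps plus passive signals can rank a catalog in the first session.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Item:
    name: str
    tags: set[str]
    city: str
    popularity: float  # hypothetical 0..1 share of recent bookings


@dataclass
class ColdStartProfile:
    interests: set[str]  # explicit: bubbles tapped during onboarding
    city: Optional[str] = None  # implicit: coarse device location
    context_tags: set[str] = field(default_factory=set)  # implicit: e.g. {"weekend"} from calendar


# Hypothetical hand-tuned weights: Layer 1 is rules, not a trained model.
W_INTEREST, W_LOCAL, W_CONTEXT, W_POPULAR = 2.0, 1.0, 1.0, 0.5


def score(item: Item, profile: ColdStartProfile) -> float:
    s = W_INTEREST * len(item.tags & profile.interests)
    if profile.city is not None and item.city == profile.city:
        s += W_LOCAL
    s += W_CONTEXT * len(item.tags & profile.context_tags)
    return s + W_POPULAR * item.popularity  # popularity breaks ties and fills gaps


def cold_start_recommend(catalog: list[Item], profile: ColdStartProfile, n: int = 3) -> list[str]:
    """Top-n item names by rule score for a user with no behavioral history."""
    ranked = sorted(catalog, key=lambda it: score(it, profile), reverse=True)
    return [it.name for it in ranked[:n]]
```

With no interests selected and no context, the score reduces to popularity alone, which is the popularity-based approach acting as the floor of the system.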

Layer 2 (Growing Intelligence): As usage data accumulated, collaborative and content filtering models kicked in. Similar users informed recommendations. Similar items expanded discovery. Association rule mining added session-context awareness — what patterns of behavior within a single visit predicted intent.
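Association rule mining over single sessions can be illustrated with the standard support, confidence, and lift measures. This Python sketch counts item pairs by brute force instead of running a full Apriori or FP-Growth implementation, and the session events (`search_flight`, `view_hotel`, and so on) are hypothetical.

```python
from collections import Counter
from itertools import combinations

Rule = tuple[str, str, float, float, float]  # (lhs, rhs, support, confidence, lift)


def pair_rules(
    sessions: list[set[str]], min_support: float = 0.2, min_confidence: float = 0.5
) -> list[Rule]:
    """Mine single-item rules lhs -> rhs from sessions (the set of actions in one visit).

    support(A, B)      = share of sessions containing both A and B
    confidence(A -> B) = support(A, B) / support(A)
    lift(A -> B)       = confidence(A -> B) / support(B)
    """
    n = len(sessions)
    item_counts = Counter(item for s in sessions for item in s)
    pair_counts = Counter(pair for s in sessions for pair in combinations(sorted(s), 2))
    rules: list[Rule] = []
    for (a, b), both in pair_counts.items():
        support = both / n
        if support < min_support:
            continue  # too rare to trust
        for lhs, rhs in ((a, b), (b, a)):
            confidence = both / item_counts[lhs]
            if confidence >= min_confidence:
                lift = confidence / (item_counts[rhs] / n)
                rules.append((lhs, rhs, round(support, 3), round(confidence, 3), round(lift, 3)))
    # Strongest rules first; ties broken alphabetically for stable output.
    return sorted(rules, key=lambda r: (-r[3], r[0], r[1]))
```

A lift above 1 means two actions co-occur more often than their individual frequencies would predict, which is what makes a rule useful as a signal of intent within the current visit.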

Layer 3 (Deep Personalization): Machine learning models trained on historical and real-time behavioral data generated increasingly precise AI recommendations. The system moved from reactive (“you looked at flights to Bali, here are Bali hotels”) to proactive (“based on your patterns, you’re likely planning a trip in three weeks — here’s a curated package”). We built in a deliberate serendipity factor — 10-15% of recommendations were intentional surprises to prevent the filter bubble problem and build the kind of delight that drives organic sharing.

The 10-15% serendipity factor was a deliberate exploration bet. Later, I connected it to a principle Kenneth Stanley and Joel Lehman articulate brilliantly in Why Greatness Cannot Be Planned: the most interesting discoveries come from exploration, not optimization toward a fixed objective. Without the serendipity factor, the system converges on a local optimum: users get exactly what they want, stop exploring, and the recommendation surface shrinks. The serendipity factor forces exploration, which generates new signal, which expands the recommendation surface. It’s the explore/exploit tradeoff familiar from multi-armed bandit problems: trading a small amount of short-term conversion for long-term ecosystem health. A recommendation engine that only confirms what it already knows about you is a recommendation engine that’s dying.
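The serendipity factor behaves like a fixed-fraction variant of epsilon-greedy exploration: reserve a share of slots for items the ranker would not have chosen. The Python sketch below assumes a ranked list from the exploit side and a separate pool of exploration candidates; the function name and the 0.12 default (inside the 10-15% band) are illustrative assumptions.

```python
import random
from typing import Optional


def blend_with_serendipity(
    ranked: list[str],
    exploration_pool: list[str],
    n: int = 10,
    rate: float = 0.12,
    rng: Optional[random.Random] = None,
) -> list[tuple[str, bool]]:
    """Return n (item, is_exploration) slots: mostly top-ranked items plus a few surprises."""
    if rng is None:
        rng = random.Random()
    n_explore = round(n * rate)
    top = set(ranked[:n])
    # Surprises must come from outside what the ranker already wants to show.
    candidates = [x for x in exploration_pool if x not in top]
    explore = rng.sample(candidates, min(n_explore, len(candidates)))
    result = [(item, False) for item in ranked[: n - len(explore)]]
    for item in explore:
        # Random positions, so surprises aren't always buried at the bottom.
        result.insert(rng.randrange(len(result) + 1), (item, True))
    return result
```

Each slot carries an `is_exploration` flag so feedback on the surprises can be logged separately; that logged feedback is the new signal the exploration is meant to generate.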

The UI treated the entire journey as a single conversation. Onboarding flowed into browsing, which flowed into personalized discovery, with no hard boundaries between “setup” and “using the app.”

What We Deliberately Didn’t Build

Not every decision is about what you add. Some of the highest-leverage calls were about what we left out.

- Didn’t start with machine learning. Layer 1 was entirely rules-based. ML only kicks in when you have enough data to justify it. Deploying ML on thin data doesn’t give you AI recommendations; it gives you confident-looking noise, mostly false positives.

- Didn’t build a single recommendation algorithm. Built a progression. Each model has a data threshold below which it produces garbage. One-size-fits-all is a recipe for mediocre recommendations across the board.

- Didn’t treat onboarding and personalization as separate systems. Merged them. The onboarding IS the data collection. Separating them means you’re asking users to do work now for value later — and users don’t do that.

- Didn’t try to eliminate decision fatigue by reducing options. A superapp with fewer options defeats its own purpose. Instead, we surfaced the RIGHT options at the right time. The volume stayed. The overwhelm disappeared.

Results

- 35% projected conversion rate improvement through contextually relevant, timely suggestions

- Measurably higher engagement rates driven by reduced decision fatigue and personalisation that felt helpful rather than intrusive

- Cold start problem effectively eliminated — users received meaningful AI recommendations from their first session

- Higher app retention rates through gamified engagement loops and real-time responsiveness

- Revenue model benchmarked against Amazon’s recommendation engine, which widely cited estimates credit with driving around 35% of Amazon’s revenue

Where the Model Breaks

The 3-layer architecture isn’t universal. It breaks when user behavior is genuinely random, with no patterns to learn from and no segments to cluster. It also degrades when segments are too small for collaborative filtering to reach statistical significance. If your user base fragments into micro-niches before Layer 2 has enough data to find meaningful overlaps, the system stalls between the rules-based Layer 1 and the learned layers above it. Know your minimum viable segment size before committing to Layer 2.
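One simple way to put a number on “minimum viable segment size” is the normal-approximation sample size for estimating a proportion, n = z^2 * p * (1 - p) / e^2. This is a heuristic swapped in for illustration, not necessarily how the original team set its threshold; the segment names and target margin in the usage are hypothetical.

```python
import math


def min_segment_size(p: float, margin: float, z: float = 1.96) -> int:
    """Users needed to estimate a segment's conversion rate p to within +/- margin.

    Normal-approximation sample size for a proportion: n = z^2 * p * (1 - p) / margin^2.
    z = 1.96 corresponds to roughly 95% confidence. Use p = 0.5 if p is unknown (worst case).
    """
    if not (0 < p < 1) or margin <= 0:
        raise ValueError("need 0 < p < 1 and margin > 0")
    return math.ceil(z * z * p * (1 - p) / (margin * margin))


def segments_ready(segment_sizes: dict[str, int], p: float, margin: float) -> dict[str, bool]:
    """Flag which segments are large enough for segment-level statistics."""
    needed = min_segment_size(p, margin)
    return {name: size >= needed for name, size in segment_sizes.items()}
```

For example, estimating a 5% conversion rate to within one percentage point at about 95% confidence needs 1,825 users per segment, so a micro-niche of a few hundred users would not yet justify Layer 2 under this heuristic.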

What I’d Do Differently Today

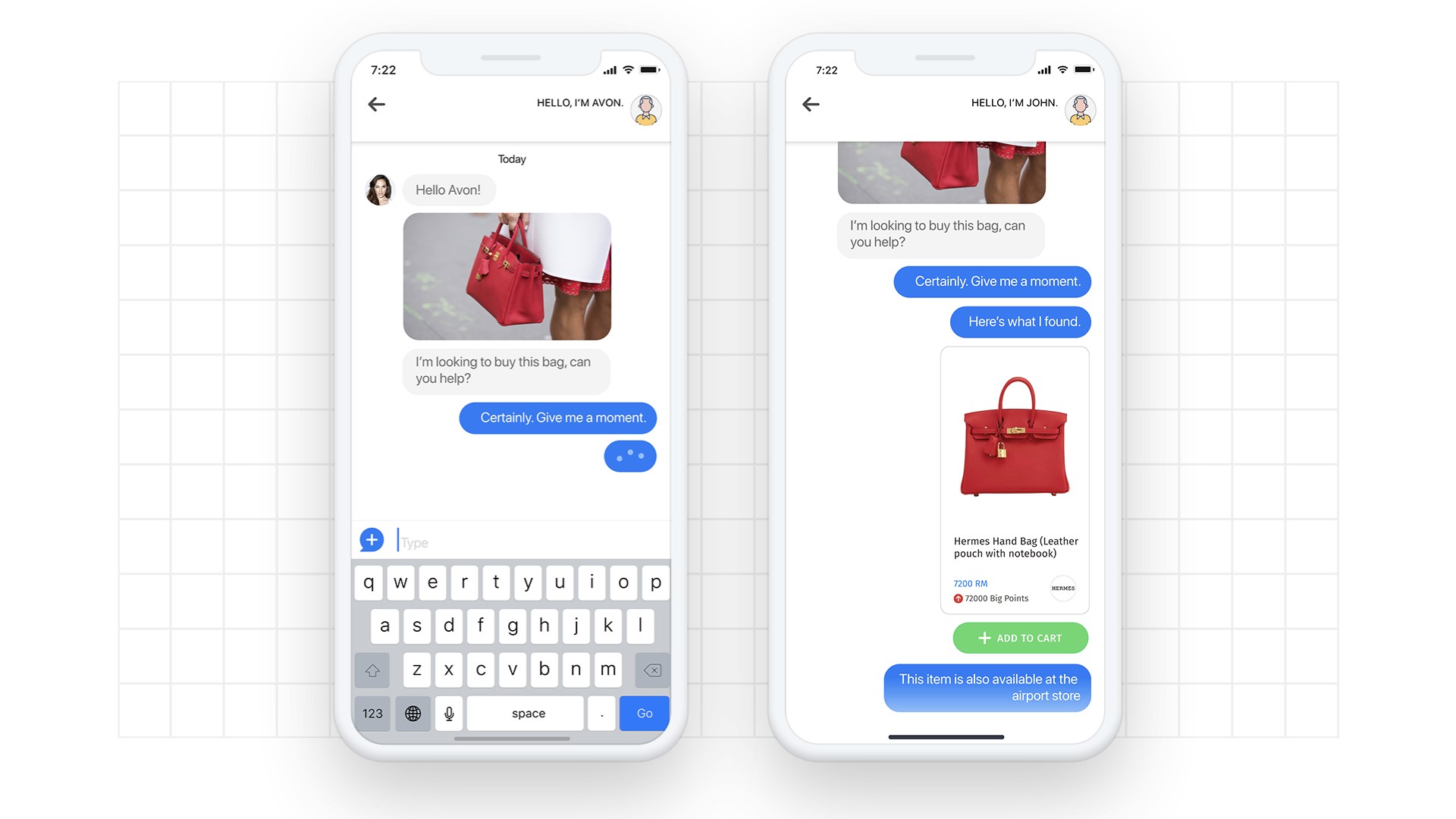

The 2019 version was state-of-the-art for its time, but the landscape has shifted dramatically. Today, I’d replace the explicit preference capture in Layer 1 with an LLM-powered conversational interface. Instead of selecting interest bubbles, users would have a brief natural language conversation: “I’m planning a family trip to Southeast Asia in April, we like beaches but my kids need activities.” One exchange like that gives you more signal than twenty bubble selections, and the experience feels like talking to a knowledgeable friend rather than configuring a machine.

The three-layer architecture remains sound — that’s a structural principle, not a technology choice. But the implementation of each layer would lean heavily on embeddings and transformer-based models — the architecture described in Vaswani et al.’s Attention Is All You Need — rather than traditional collaborative filtering. And the serendipity factor I designed manually could now be driven by generative AI that understands context well enough to surprise meaningfully rather than randomly.
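Embedding-based retrieval, the kind of replacement for traditional collaborative filtering described above, ranks items by vector similarity to a user or query vector. The Python sketch uses hypothetical three-dimensional item embeddings and a hypothetical query vector standing in for an encoded conversational request; a real system would take both from a trained transformer encoder and search a vector index instead of a dictionary.

```python
from math import sqrt

# Hypothetical 3-d item embeddings; real ones would come from a trained encoder
# and have hundreds of dimensions.
ITEM_EMBEDDINGS: dict[str, list[float]] = {
    "bali_beach_villa": [0.9, 0.1, 0.3],
    "phuket_kids_club": [0.8, 0.2, 0.9],
    "tokyo_nightlife": [0.1, 0.9, 0.0],
    "langkawi_family_resort": [0.7, 0.1, 0.8],
}


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two dense vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = sqrt(sum(x * x for x in a))
    norm_b = sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def nearest_items(query: list[float], k: int = 2, exclude: frozenset = frozenset()) -> list[str]:
    """Brute-force k-nearest-neighbour search over the item embeddings."""
    scored = [(cosine(query, vec), name) for name, vec in ITEM_EMBEDDINGS.items() if name not in exclude]
    return [name for _, name in sorted(scored, reverse=True)[:k]]
```

If the encoder maps text requests and catalog items into a shared space, a new user’s first sentence already yields a query vector, so this style of retrieval has something to work with before any click history exists.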

Compounding Patterns

The core insight, that personalization starts at onboarding rather than after it, resurfaced in my work on invisible banking. Same principle, different domain: design the first-touch experience to capture enough signal that every subsequent interaction can be intelligent. I wrote about this pattern in depth in my piece on designing intelligent recommendation engines. The pattern scales from travel superapps to financial services. When you see the same product thinking work across unrelated industries, it stops being a technique and starts being a principle.

Key Takeaway

The cold start problem isn’t a data problem — it’s a design problem. If your product can’t generate useful AI recommendations without existing data, you haven’t designed the user onboarding experience properly. Make data collection inseparable from value delivery, and cold start stops being a problem.

FAQ

How do you solve the cold start problem in AI recommendation engines?

Design the onboarding experience to double as data collection. Combine explicit user input (preferences, interests) with implicit signals (location, device, time context) to generate meaningful AI recommendations from the first session. The key is making data capture feel valuable to the user, not extractive. This personalization strategy eliminates the zero-data state by design.

What is a hybrid recommendation system and when should you use one?

A hybrid recommendation system layers multiple filtering approaches — popularity, collaborative filtering, content-based, and association rules — activating each as data density supports it. Use this approach when you need to serve users across a wide spectrum of data availability, from brand-new to deeply engaged.

How do superapp recommendation engines differ from single-product ones?

Superapps face a combinatorial explosion of recommendation contexts — flights, hotels, food, payments, activities — each with different decision frameworks and time horizons. The recommendation engine needs to understand cross-domain intent (flight search implies hotel need) and manage cognitive load across categories, not just within them. Superapp personalization requires thinking in systems, not features.