The Problem

A German manufacturing leader had spent decades building engineering excellence. They also had data — mountains of it. But having data and being data-driven are not the same thing. Not even close.

Leadership used the phrase “data-driven” in every strategy deck. It echoed through town halls and quarterly reviews. But in the corridors where actual work happened, the picture was different. Teams operated in silos. Each department hoarded its own datasets, built its own dashboards, interpreted numbers through its own lens. There was no shared language around data, no unified organizational data strategy, and no mechanism to turn raw information into organizational intelligence. They were data-rich and insight-poor — a giant with extraordinary muscles and no coordination.

Data sharing in siloed organizations is a prisoner’s dilemma. Each department hoards data because sharing feels like losing power — if I give you my data, you might make better decisions than me. Mandating data sharing doesn’t resolve this. It creates resentment and compliance theater. The real question isn’t “how do we force people to share data?” It’s “how do we make the cost of NOT sharing impossible to ignore?”

The deeper problem wasn’t technical — it was systemic, in the way Donella Meadows means in Thinking in Systems: the structure of the system was producing the behaviour. Yes, the data was often the wrong data, poorly structured, collected without clear intent. But the real blockers were cultural. Business strategy and data strategy were misaligned. There was no experimentation culture. Teams lacked the capability — and often the confidence — to interpret data and act on it. The gap between “we have data” and “we make decisions with data” was a canyon, and no amount of tooling was going to bridge it alone.

The Approach

I developed the Data Trinity directly from a hard lesson. A year earlier, I’d pitched a technically ambitious digital twin for pharmaceutical R&D. Stakeholders loved the vision. Data governance killed the funding. That failure taught me something I couldn’t unlearn: data maturity is the prerequisite, not the outcome. You cannot build intelligent products on top of organizational data chaos. The Data Trinity framework is, in many ways, the answer to the question that pharmaceutical project forced me to ask — what does an organization actually need to get right before any data-driven ambition becomes achievable?

I started where I always start: diagnosis before prescription. Before designing any solution, we needed to understand the actual terrain — not what leadership believed the organization looked like, but what the data practices actually were at every level. This is the foundation of any honest data maturity assessment.

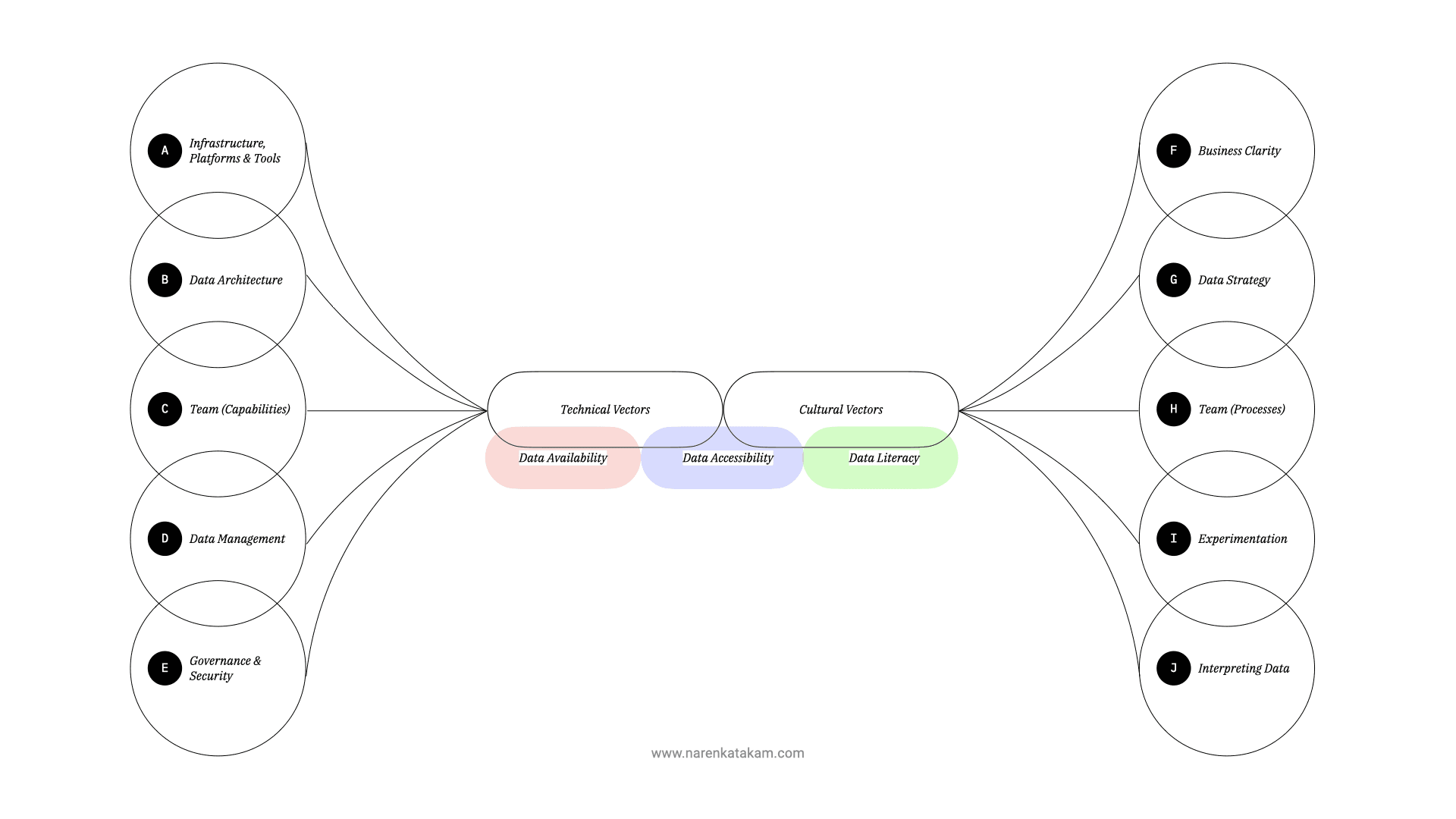

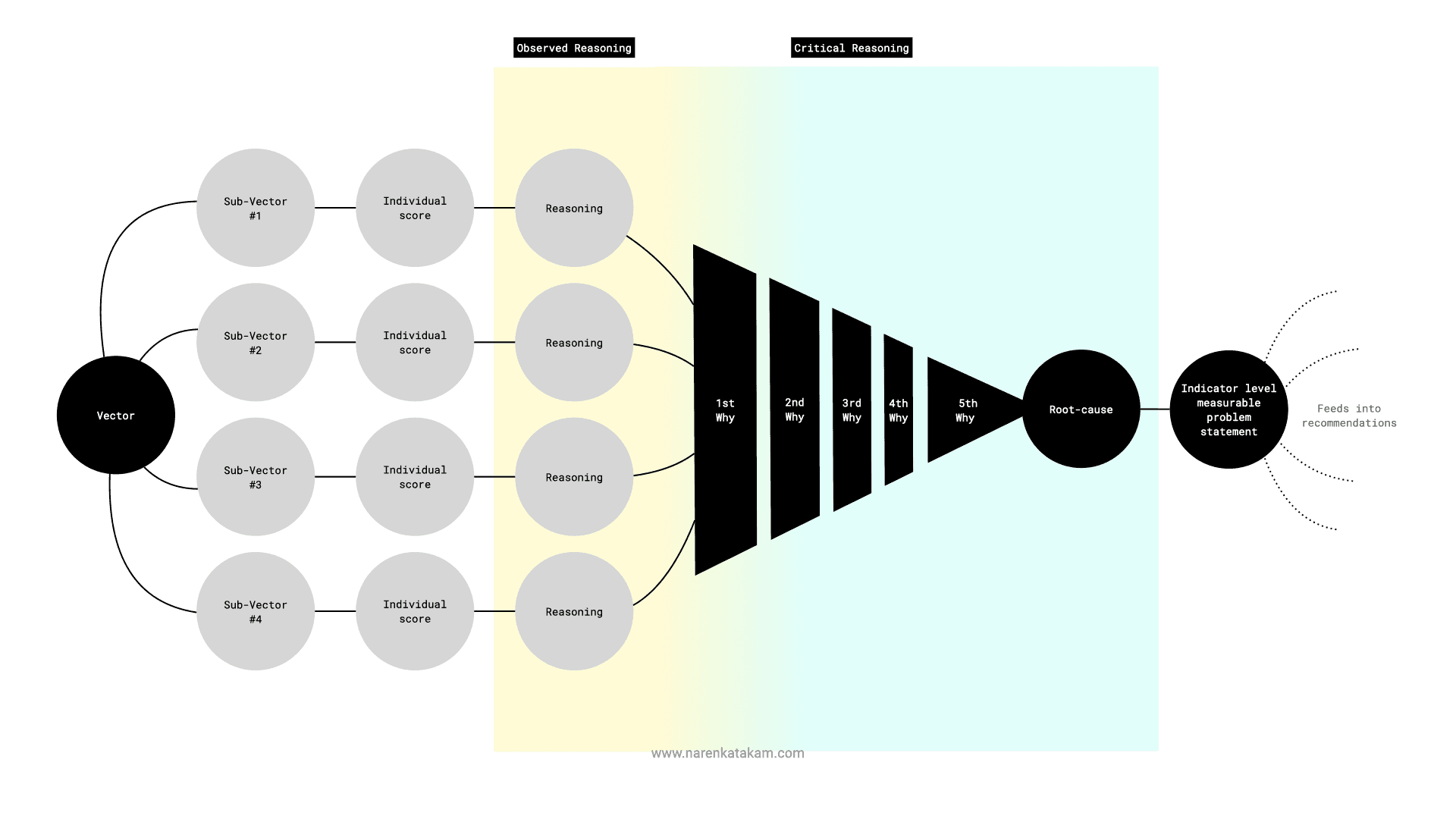

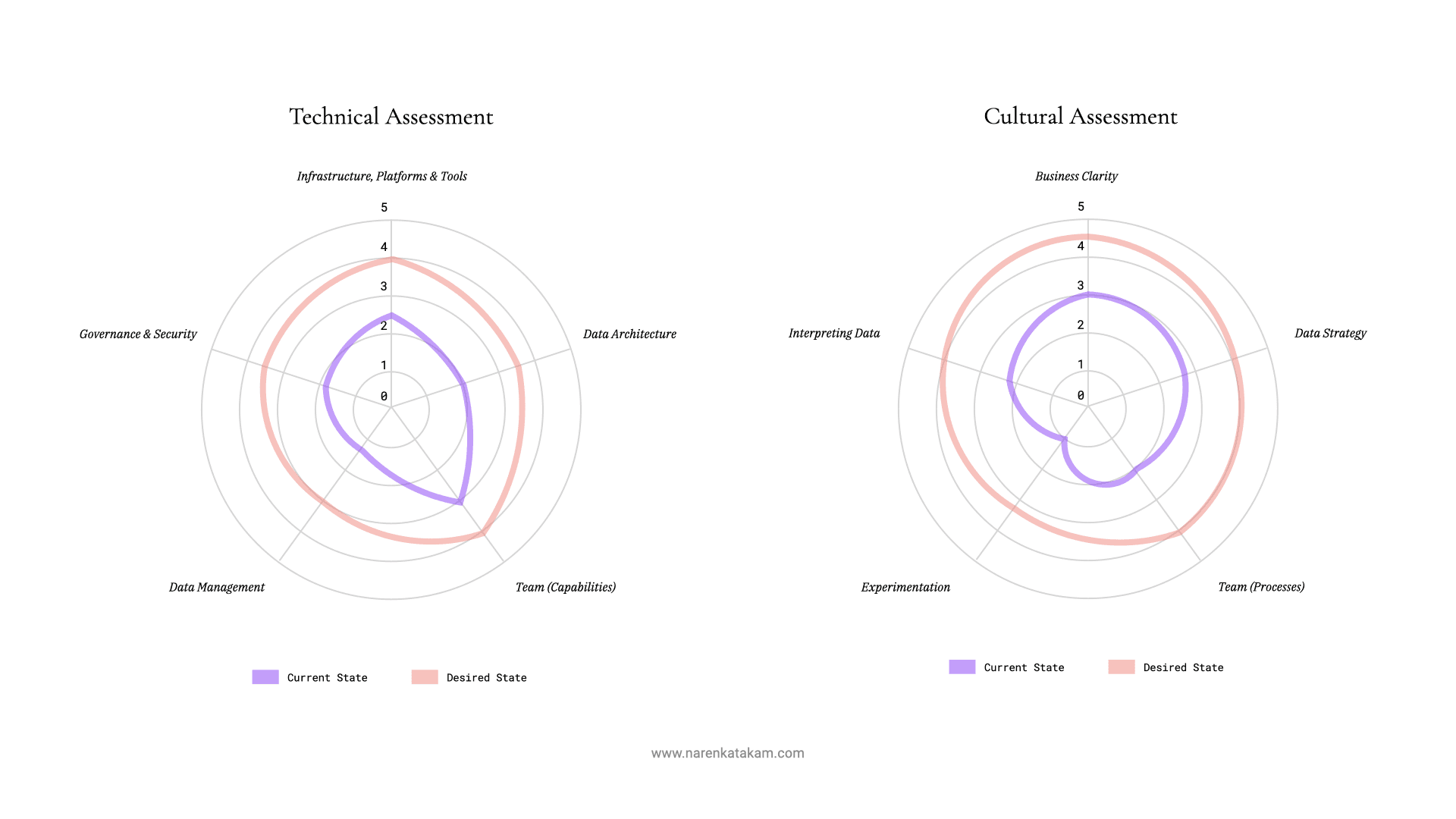

We built a structured assessment across ten vectors, split into two categories. Five technical vectors: infrastructure and tooling, data architecture, team capabilities, data management, and data governance and security. Five cultural vectors: clarity of business strategy, integration of data strategy, team processes, experimentation culture, and capability in interpreting data. Each vector was scored individually, producing a granular heat map of where the organization actually stood versus where it needed to be.
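To make the scoring mechanics concrete, here is a minimal Python sketch of a ten-vector assessment. The vector names come from the assessment described above; the 1-to-5 scale, the `VectorScore` type, the helper names, and every score in the example are illustrative assumptions, not the engagement's actual instrument or findings.

```python
from dataclasses import dataclass

# Vector names as listed in the assessment; everything else is hypothetical.
TECHNICAL = [
    "infrastructure and tooling",
    "data architecture",
    "team capabilities",
    "data management",
    "data governance and security",
]
CULTURAL = [
    "clarity of business strategy",
    "integration of data strategy",
    "team processes",
    "experimentation culture",
    "capability in interpreting data",
]


@dataclass(frozen=True)
class VectorScore:
    name: str
    category: str  # "technical" or "cultural"
    current: int   # assumed 1-5 maturity scale
    target: int    # assumed 1-5 maturity scale

    @property
    def gap(self) -> int:
        """Distance between where the organization is and where it needs to be."""
        return self.target - self.current


def build_assessment(current: dict, target: dict) -> list:
    """Pair current and target scores for all ten vectors."""
    scores = []
    for category, names in (("technical", TECHNICAL), ("cultural", CULTURAL)):
        for name in names:
            scores.append(VectorScore(name, category, current[name], target[name]))
    return scores


def largest_gaps(scores: list, top_n: int = 3) -> list:
    """Return the vectors with the widest current-to-target gap, widest first."""
    return sorted(scores, key=lambda s: s.gap, reverse=True)[:top_n]


# Placeholder scores for illustration only.
example_current = {
    **{name: 3 for name in TECHNICAL},
    **{name: 2 for name in CULTURAL},
    "experimentation culture": 1,
}
example_target = {name: 4 for name in TECHNICAL + CULTURAL}

assessment = build_assessment(example_current, example_target)
for score in largest_gaps(assessment):
    print(f"{score.category:9} | {score.name}: gap {score.gap}")
```

The same list of current and target values is what a radar diagram would plot; sorting by gap is just one simple way to surface where the conversation should start.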

The methodology mattered as much as the findings. We used root-cause analysis — the “5 Whys” technique applied systematically — to cut through surface symptoms. Stakeholder interviews, cross-functional workshops, radar diagrams mapping current state against target state. The goal was not a consultant’s report that sits on a shelf. It was a shared, evidence-based understanding that every stakeholder could see themselves in.

This is where the prisoner’s dilemma resolved itself. The Data Trinity assessment doesn’t force data sharing — it makes the COST of not sharing visible through a shared diagnostic. When every team can see the same radar chart showing exactly where the organization bleeds value, hoarding becomes indefensible. Nobody mandated collaboration. The assessment made non-collaboration look like what it was: organizational self-harm. When people can point at the same radar chart and say “that gap is why we can’t move,” you’ve already started the data transformation.

What the assessment revealed was a pattern I’ve seen across industries: the organization was trying to solve a systems problem with point solutions. A new dashboard here, an enterprise data platform there, a data literacy training program that nobody attended. The pieces existed, but there was no coherent frame connecting them. That diagnosis is what led to the Data Trinity.

What We Built

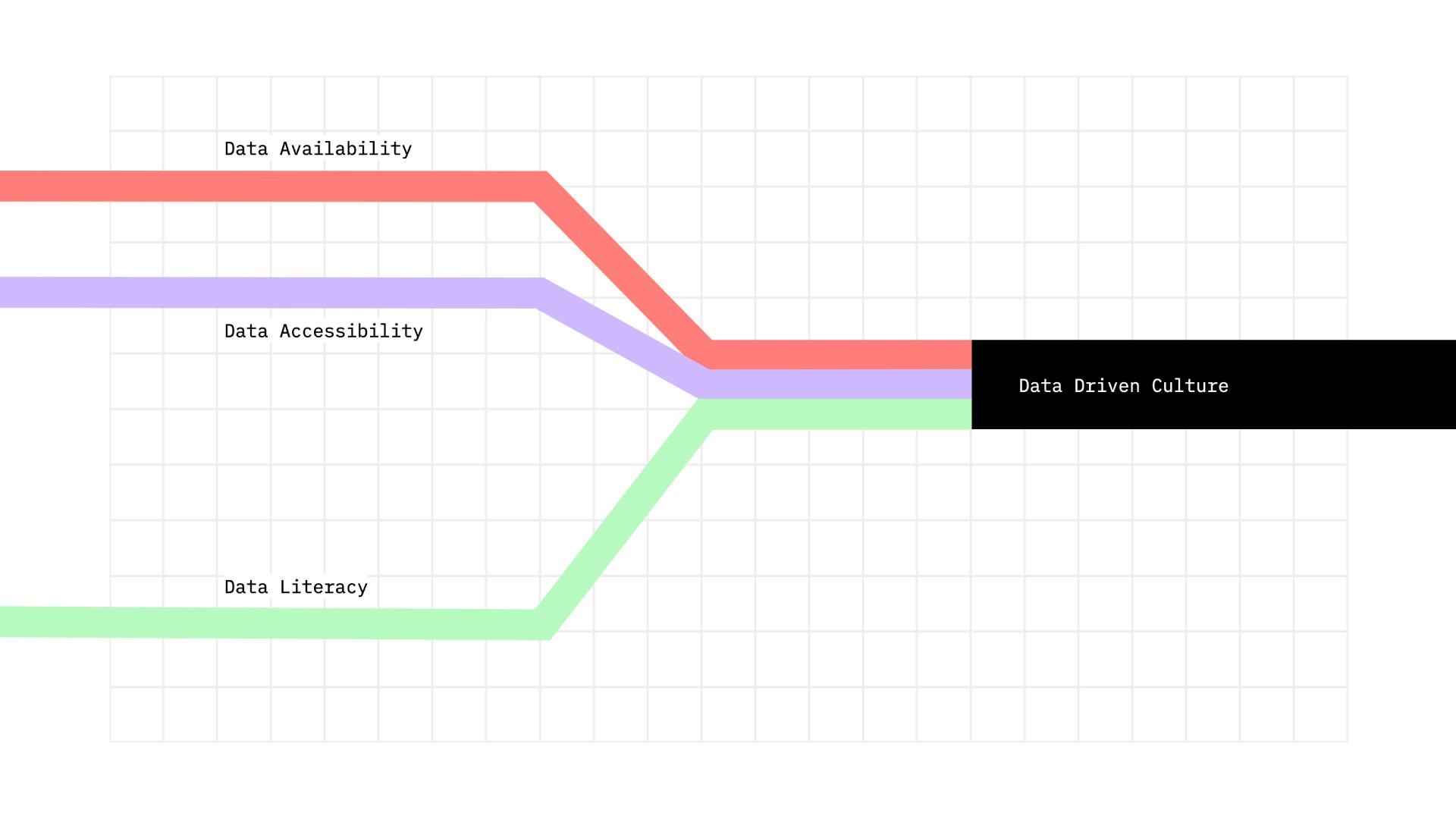

The Data Trinity is a framework I developed to give organizations a simple, honest answer to the question: what does it actually take to become data-driven? It distills the problem into three pillars, each necessary, none sufficient alone.

Data Availability — does the organization capture the right data, tied to clearly defined business problems? This is where product thinking meets organizational data strategy. Claude Shannon’s information theory offers the right mental model here: information is only meaningful relative to a question. You don’t start with what data you have. You start with what decisions you need to make, and work backwards to what data those decisions require. Most organizations get this backwards, collecting everything and hoping insight emerges.
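Working backwards from decisions to data can be sketched as a simple set comparison. This is a hypothetical illustration: the decision descriptions, dataset names, and the `availability_gaps` helper are all assumptions made up for the example, not artifacts from the engagement.

```python
def availability_gaps(decisions: dict, captured: set) -> dict:
    """Compare the data that decisions require with the data actually captured.

    decisions maps each business decision to the datasets it needs.
    captured is the set of datasets the organization collects today.
    """
    required = set().union(*decisions.values())
    captured = set(captured)
    return {
        # Needed for a decision but not collected.
        "missing": sorted(required - captured),
        # Collected but not tied to any stated decision.
        "unlinked": sorted(captured - required),
    }


# Hypothetical decisions and datasets, for illustration only.
decisions = {
    "which plant needs a quality intervention": {
        "defect_rates_by_plant",
        "production_volume",
    },
    "when to schedule maintenance": {"machine_sensor_logs"},
}
captured = {"production_volume", "machine_sensor_logs", "website_clickstream"}

gaps = availability_gaps(decisions, captured)
print(gaps)
```

Starting from the decisions makes both failure modes visible at once: data a decision needs that nobody captures, and data that is captured without any decision attached to it.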

Data Accessibility — can people across the organization actually find, understand, and use the data that exists? This is the platform problem — building infrastructure where data assets are discoverable, reusable, and governed. Not a data swamp that only the engineering team can navigate. The balance between observability, reusability, and discoverability is where most enterprise data platforms fail.

Data Literacy — do people have both the skills and the mindset to work with data? This is the hardest pillar, because it’s a culture change, not a training program. We adapted a product adoption curve model specifically for data consumption, which let us identify where different teams and roles sat on the adoption spectrum and design targeted interventions instead of one-size-fits-all workshops. A real data literacy framework meets people where they are — it doesn’t pretend everyone needs the same skills.

Each pillar maps to the full data lifecycle — from generation and collection through cleaning, exploration, and consumption. We built a data maturity assessment scale alongside the framework, allowing the organization to benchmark where they stood on each pillar and track progress over time. This wasn’t a one-shot consulting engagement. It was a data transformation roadmap designed for continuous, measurable improvement.
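As a rough sketch of what tracking progress over time can look like, the snippet below compares two assessment snapshots per pillar and flags regressions. The integer scale, the snapshot values, and the function names are assumptions for illustration; the actual maturity scale is not reproduced here.

```python
PILLARS = ("availability", "accessibility", "literacy")


def pillar_deltas(previous: dict, latest: dict) -> dict:
    """Change in maturity score per pillar between two assessment snapshots."""
    return {pillar: latest[pillar] - previous[pillar] for pillar in PILLARS}


def regressions(previous: dict, latest: dict) -> list:
    """Pillars whose score dropped since the previous snapshot."""
    deltas = pillar_deltas(previous, latest)
    return [pillar for pillar, delta in deltas.items() if delta < 0]


# Hypothetical snapshots on an assumed 1-5 scale.
baseline = {"availability": 2, "accessibility": 1, "literacy": 2}
followup = {"availability": 3, "accessibility": 2, "literacy": 1}

print(pillar_deltas(baseline, followup))
print(regressions(baseline, followup))
```

Keeping the comparison this plain is deliberate: a regression shows up as a negative number that anyone can read without interpretation.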

There’s a property of the Data Trinity that only became clear to me after deploying it: the framework is antifragile, in Taleb’s sense (Antifragile). Every organizational crisis that reveals data gaps — a failed product launch traced back to misread market data, a compliance near-miss from ungoverned datasets, a strategic decision made on stale numbers — doesn’t weaken the framework. It strengthens it. Each failure feeds the diagnosis. Each data-related stumble makes the radar chart more precise and the case for change more undeniable. Unlike specific tools that deprecate or platforms that age out, the Data Trinity gets more relevant as problems compound. The worse your data situation, the more the framework has to work with.

Results

- Enhanced data collection processes aligned to actual business questions, producing richer and more actionable datasets

- Measurable increase in cross-departmental data usage and platform adoption rates

- Improved data literacy scores across roles and seniority levels, validated through internal assessments

- Faster decision-making cycles through clearer data governance and access patterns

- A shared vocabulary around data strategy that broke down silos between technical and non-technical teams — arguably the single most valuable outcome

- A repeatable data maturity assessment that gave leadership a real dashboard of transformation progress, not gut feel

As an external strategist, I built the diagnostic framework, ran the assessment, and designed the transformation roadmap. The organization owns the multi-year execution. My intellectual contribution — the Data Trinity framework itself — carries my name. If it doesn’t work, that’s on the framework, not just the implementation. That’s the only honest relationship between a strategist and their ideas: skin in the game.

What We Deliberately Didn’t Build

The most important decisions in this engagement were what we SUBTRACTED from the typical data transformation playbook.

Didn’t build another data lake. The organization had enough infrastructure — it lacked shared understanding. Adding another enterprise data platform to a landscape already cluttered with underused platforms would have been malpractice. The problem was never storage. It was meaning.

Didn’t create more dashboards. Dashboards were part of the problem, not the solution. Every silo had its own beautiful visualizations that told its own story. More dashboards would have meant decorating data silos with better graphics — the organizational equivalent of rearranging deck chairs.

Didn’t run a generic data literacy training program. One-size-fits-all training is organizational theater. It checks a box and changes nothing. We designed targeted interventions based on where each team actually sat on the adoption curve, not a corporate-mandated curriculum.

Didn’t start with technology selection. We removed the tooling-first mindset entirely. No RFPs for analytics platforms. No vendor evaluations. No architecture diagrams. Not until the organization understood what problem it was actually solving. Data governance, data strategy alignment, and cultural readiness came first — tools came last.

Via negativa. The framework’s power is as much in what it excludes as what it includes.

What I’d Do Differently Today

The biggest shift since 2022 is what AI makes possible on the Accessibility and Literacy pillars.

On accessibility — and this connects to what I wrote about in From Chaos to Clarity: Crafting a Comprehensive AI Strategy — LLM-powered data discovery would change the game entirely. Instead of building elaborate metadata catalogs and hoping people search them, you build a conversational interface where anyone can ask “show me last quarter’s production defect rates by plant” in plain language and get an answer. The platform becomes a dialogue, not a catalog. That alone would have accelerated adoption by months.

On literacy: the skills gap that once took dedicated data literacy programs to close is now partially bridged by AI assistants that help people query, interpret, and visualize data without SQL skills or statistical training. The literacy bar doesn’t disappear (you still need people who understand what questions to ask and whether the answers make sense), but the mechanical barrier drops dramatically. I’d redesign the literacy pillar today around critical thinking and question-framing rather than tool proficiency.

The Data Trinity framework itself still holds. Availability, Accessibility, Literacy — the three pillars remain the right decomposition. But the solutions within each pillar would look substantially different with today’s AI capabilities.

Key Takeaway

Data transformation is culture transformation. The organizations that become genuinely data-driven are not the ones with the best data infrastructure — they’re the ones that build a shared language, a shared diagnostic, and a framework simple enough that every stakeholder from the boardroom to the factory floor can point at the same map and say “here’s where we are, here’s where we’re going.”

The Data Trinity has a clear failure mode: cosmetic adoption. When leadership uses “data-driven” as a buzzword without genuine commitment to changing how decisions get made, the framework becomes organizational theater — a data maturity assessment that nobody acts on, a radar chart that decorates a quarterly deck. The framework requires executive skin in the game to work. Without it, you get the vocabulary of a data-driven culture without the substance. I’d rather an organization honestly say “we’re not ready” than perform readiness. The assessment is designed to make that distinction sharp and uncomfortable.

FAQ

What is the Data Trinity framework?

The Data Trinity is an original framework for assessing and transforming organizational data maturity. It breaks the problem of “becoming data-driven” into three interdependent pillars: Data Availability (capturing the right data for the right business problems), Data Accessibility (making data discoverable, reusable, and governed across an enterprise data platform), and Data Literacy (building both the skills and the mindset for data-driven decision-making). Each pillar maps to the full data lifecycle and includes a data maturity assessment scale for tracking progress. The framework addresses both technical infrastructure and cultural foundations — because data transformation requires both.

Why do most data transformation initiatives fail?

Most fail because they treat transformation as a technology problem. Organizations invest in the technical infrastructure (data lakes, dashboards, and analytics platforms) without addressing the cultural foundations: business strategy that is unclear or misaligned with data strategy, siloed teams, no experimentation culture, and insufficient capability in interpreting data. The Data Trinity framework covers both technical and cultural vectors in its ten-vector diagnostic because you cannot solve one without the other. Effective data governance requires cultural change, not just policy documents.

How long does a data-driven culture transformation take?

There is no honest answer shorter than “it depends,” but the realistic range for meaningful, measurable change is 12 to 24 months. The framework and assessment can be built in weeks. Quick wins — like improving data accessibility for a single high-value use case — can land within a quarter. But genuine cultural shifts in how an organization makes decisions require sustained effort, executive sponsorship, and a measurement system that keeps the transformation honest. The data maturity assessment is designed precisely for this: making progress visible and regressions undeniable.