The Problem

A global insurance company had some of the best developers in the industry. They were spending a third of their time on work that had nothing to do with building software. Debugging. Manual code reviews. Dependency resolution. Compliance checks. Provisioning environments. Waiting for other teams to unblock them.

This wasn’t a tooling gap. It was a systems failure. Hundreds of developers across teams and geographies, all working with fragmented internal developer platform infrastructure that lacked integration, contextual intelligence, and basic usability. The result: siloed operations, invisible bottlenecks, and leadership flying blind on developer productivity. In a regulated industry where speed, accuracy, and compliance are non-negotiable, the inability to ship fast was becoming a business risk.

Developer platforms face a classic coordination game: no individual developer will adopt a new platform unless enough others do too. So a developer productivity platform that requires wholesale adoption to deliver value is dead on arrival. That insight shaped everything that followed.

The real question wasn’t “how do we make developers more productive?”, a question I explored recently in The AI Productivity Trap. It was: what would it look like if we treated developer experience as a product-thinking problem and designed a platform from first principles?

The Approach

Most platform teams start with a tool wish-list. We started with the developer. Jobs-to-be-Done interviews across functions, seniorities, and geographies. We mapped the entire developer journey — onboarding, project setup, coding, review, testing, deployment, observability — and tracked where time and energy leaked. The patterns were consistent: long feedback loops, unclear ownership, inconsistent CI/CD pipelines, redundant debugging, and compliance audits that felt like punishment.

Then we did the gap analysis. Not “what tools do we have vs. want” — but “what is the actual developer experience today vs. what would it need to be for developers to spend 90% of their time on high-value work?” The gap was architectural, not incremental. You couldn’t fix this with better Jira boards or another Slack integration. The platform itself needed reimagining.

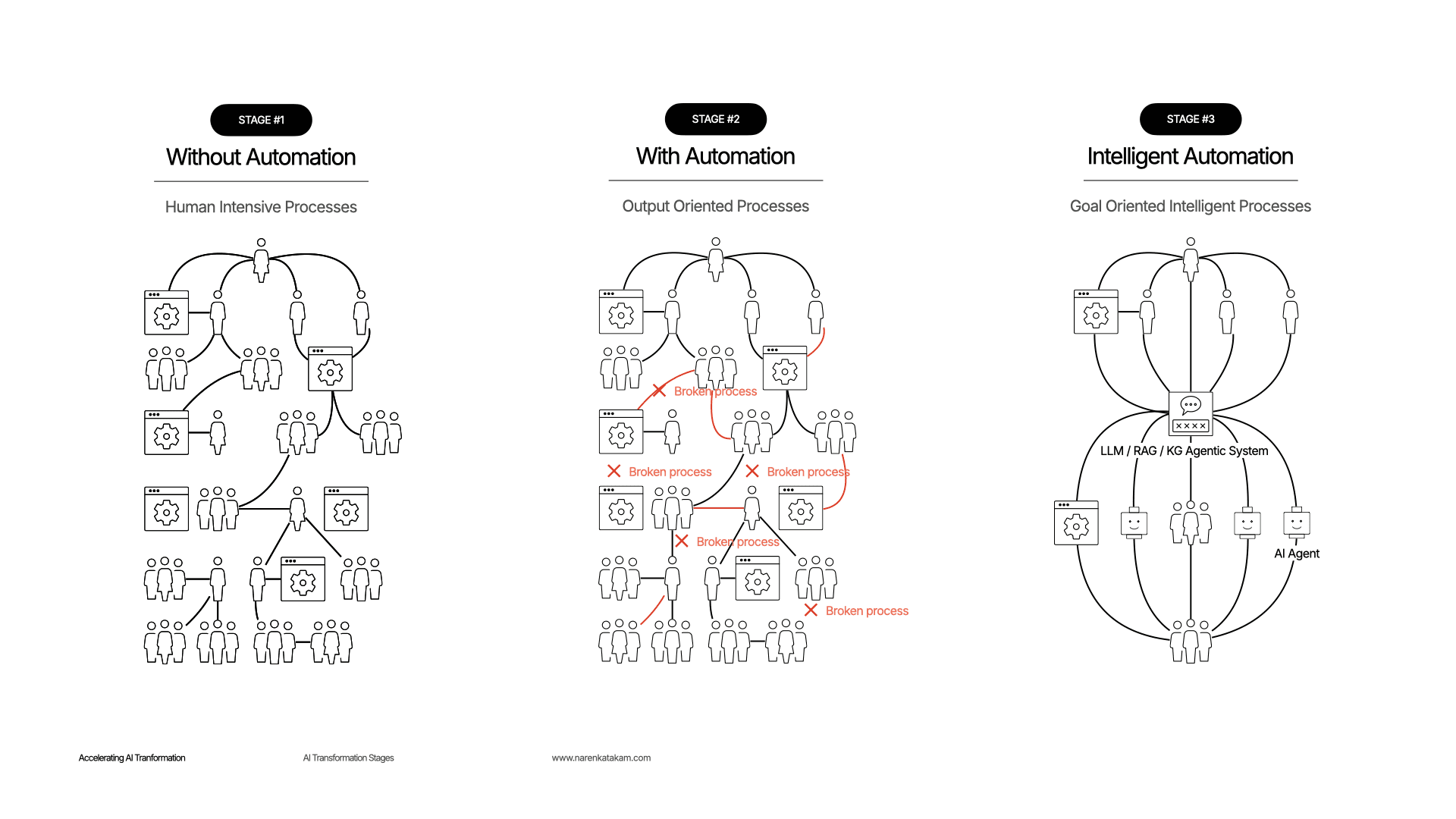

The key insight was that developer experience is a multi-layered system — something that echoes the layered, self-referencing structures Douglas Hofstadter explores in Gödel, Escher, Bach: each layer only makes sense in the context of the others, and the system’s intelligence emerges from their interaction, not from any single layer. Think of it like a building: the foundation (security, compliance) has to hold everything up. The infrastructure (self-serve, observability) has to just work. The living spaces (AI agents, knowledge, data products) have to be where developers actually spend their time. You can’t bolt AI onto a broken foundation and call it intelligent. So we proposed and designed nine layers, each with a clear responsibility, all API-first, all composable. The 9-layer architecture became the backbone of the new platform engineering vision.

A critical design principle: each layer had to be independently useful and independently fallible. If the testing plane goes down, the observability plane still works. If AI agents are temporarily unavailable, the self-serve control plane still provisions environments. No single layer failure cascades into a platform-wide outage. This wasn’t just reliability engineering — it was the architectural bet that solved the coordination game. Developers could adopt one layer at a time, get immediate value, and organically discover the others. No big-bang rollout required. That’s what turned a coordination problem into a compounding advantage.
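The failure-isolation idea can be illustrated with a minimal sketch. It assumes each layer is exposed as a plain callable; the layer names and the `call_layers` helper are hypothetical and not taken from the actual platform.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class LayerResult:
    layer: str
    ok: bool
    detail: str


def call_layers(layers: Dict[str, Callable[[], str]]) -> Dict[str, LayerResult]:
    """Call every layer independently so one failure never blocks the rest."""
    results = {}
    for name, handler in layers.items():
        try:
            results[name] = LayerResult(name, True, handler())
        except Exception as exc:
            # Contain the failure inside this layer and keep going.
            results[name] = LayerResult(name, False, f"unavailable: {exc}")
    return results


# Hypothetical stand-ins for three of the nine layers.
def provision_environment() -> str:
    return "environment ready"


def run_ai_review() -> str:
    raise ConnectionError("AI agents temporarily unavailable")


def fetch_dashboards() -> str:
    return "dashboards loaded"


results = call_layers({
    "self_serve": provision_environment,
    "ai_agents": run_ai_review,
    "observability": fetch_dashboards,
})

for result in results.values():
    print(result.layer, "ok" if result.ok else result.detail)
```

In this sketch the AI agents layer fails, yet provisioning and observability still return results, which is the property that lets developers adopt one layer at a time.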

Getting cross-functional buy-in was critical. We aligned engineering, product, compliance, and executives around four non-negotiable principles: AI-first architecture (AI is foundational, not an add-on), scalable and modular design (works for 10 or 10,000 developers), self-serve empowerment (autonomy without compromising governance), and secure-by-design (compliance enforced automatically, not retrofitted).

The composability principle, treating a platform as independent, API-first layers that strengthen each other, was something I first explored in a government digital transformation project, where composable architecture survived a black swan event (COVID). This platform carried that principle over from government services to developer services.

What We Built

The Intelligent Developer Platform was a 9-layer, composable architecture:

- Onboarding — AI-guided, customizable. Provisions environments, contextualizes tools, walks developers through organizational standards. Designed to reduce onboarding from weeks to hours.

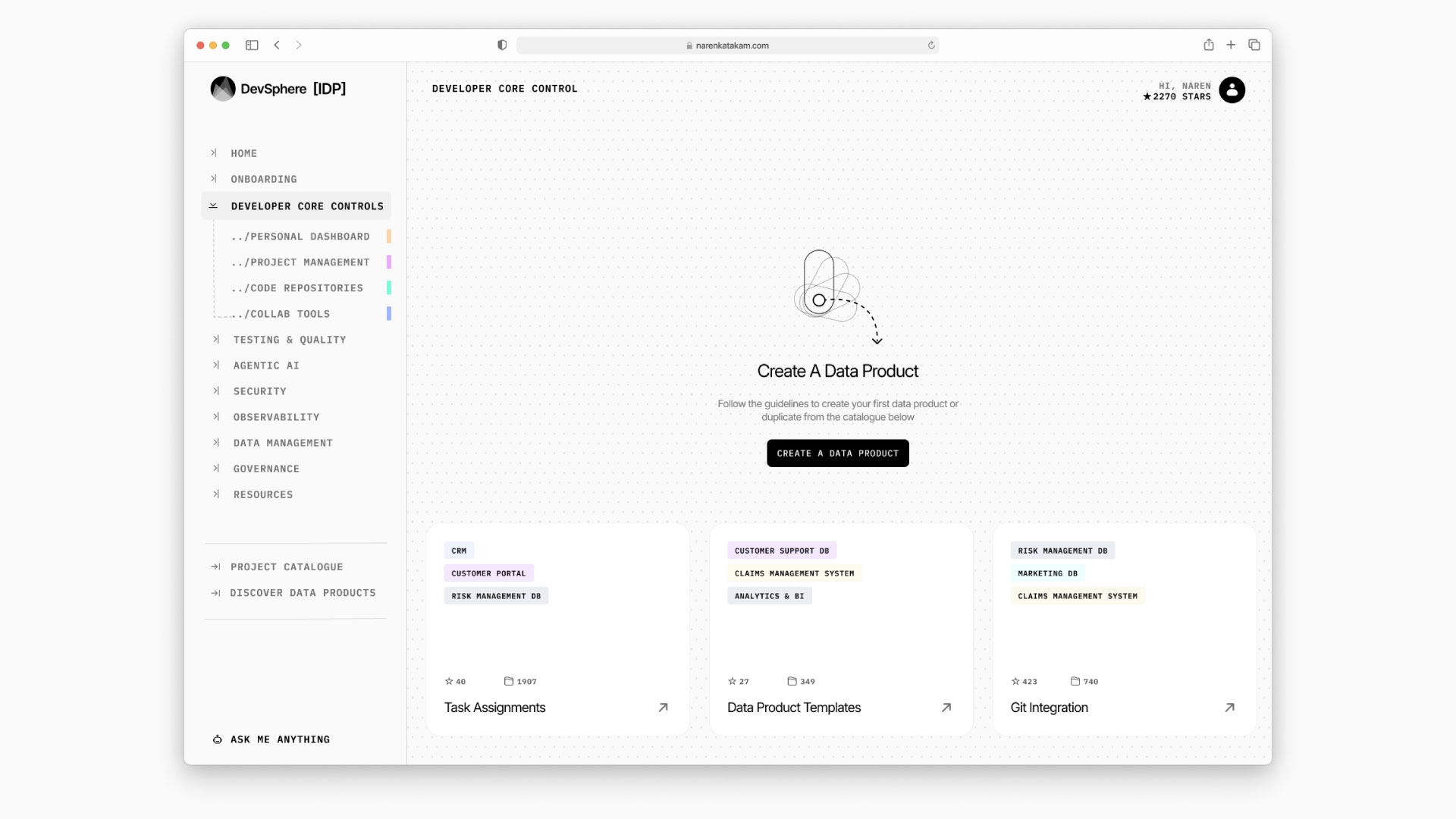

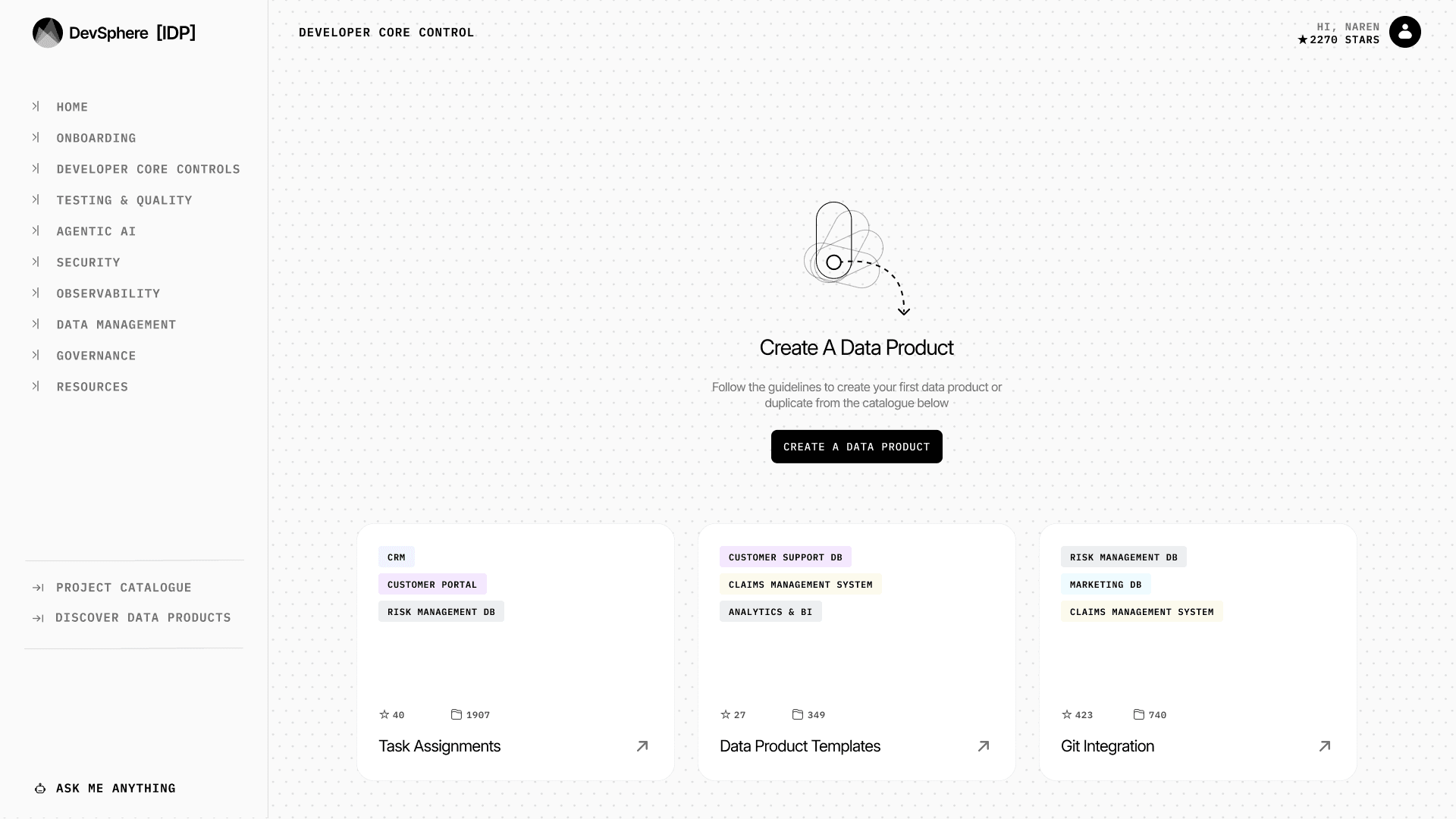

- Developer Core Control Plane — The mission control. Unified interface for projects, repositories, collaboration, and progress monitoring. Personalized dashboards that adapt to each developer’s context.

- AI Agents Plane — Specialized AI agents for code review (assessing logical consistency, architecture patterns, not just linting), security scanning (cross-referencing known vulnerabilities against internal policies), and compliance checks (automated enforcement of coding and data handling standards). Embedded into IDEs and CI/CD workflows.

- Self-Serve Control Plane — Environment provisioning, CI/CD pipeline setup, deployment orchestration. Developers ship without waiting on DevOps, with guardrails ensuring consistency.

- Testing & Quality Control Plane — AI-powered test case generation, regression detection, synthetic testing. Instant feedback loops instead of overnight test runs.

- Observability & Analytics Plane — Real-time dashboards for developer velocity, platform health, code quality trends, incident response. Actionable data for workflow optimization and team rebalancing.

- Data Products Plane — Modular, reusable datasets — curated, versioned, permissioned. Developers build data-dependent features without duplication or misalignment.

- Knowledge & Resources Plane — AI-enhanced knowledge hub. API specs, internal docs, architectural blueprints, reusable templates. Context-aware retrieval so developers find what they need, not what was last indexed.

- Security & Compliance Plane — Policy enforcement, audit trails, identity management, risk scoring across the full development lifecycle. Proactive, not reactive.

Every layer was API-first, extensible, and designed for continuous evolution. The critical design decision was human-in-the-loop throughout: AI augments the developer; it doesn’t replace decision-making. The 9 layers aren’t a stack you deploy once. They’re a system that compounds: each layer makes the others more valuable.

Results

- 33% reduction in time spent on non-core development tasks (manual reviews, provisioning, documentation)

- Up to 50% faster onboarding for new developers and cross-functional team members

- 35%+ reduction in time-to-deploy through CI/CD pipeline optimization

- Significant reduction in error rates via AI-augmented code reviews and test automation

- First clear visibility into developer productivity — enabling data-informed decisions on hiring, investment, and team allocation

A note on honesty: as an external strategist, I designed the platform architecture and the measurement framework. The metrics above are projections from prototyping and internal testing, not measured production outcomes. They were early-stage benchmarks, and the platform was still in its deployment phases at the time of my involvement. The organization owns execution. My contribution was the architectural decision that unlocked everything downstream.

What We Deliberately Didn’t Build

The key design decisions were subtractions, not additions. What we said NO to shaped the platform more than what we said yes to.

We didn’t build a monolithic platform. The entire point was composability. A unified platform that required full adoption would have repeated the same mistake the fragmented tools already made — just with a shinier UI and a single vendor.

We didn’t try to replace developer tools. Rick Rubin captures this principle in The Creative Act: the best creative environments don’t impose a new process; they remove friction from the existing one. The platform augmented existing workflows rather than asking developers to abandon them. Your IDE stays. Your terminal stays. The platform works through them, not instead of them.

We didn’t automate decisions. Human-in-the-loop was a non-negotiable. In a regulated industry, AI proposes and the developer decides. AI agents for code review flagged issues and suggested fixes — they never merged code autonomously. This wasn’t a limitation. It was a design principle.
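One way to picture the "AI proposes, developer decides" rule is a merge gate that only a recorded human approval can open. The sketch below is illustrative only: the `PullRequest`, `Suggestion`, `agent_review`, and `merge` names are assumptions, not the platform's actual API.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Decision(Enum):
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Suggestion:
    file: str
    issue: str
    proposed_fix: str


@dataclass
class PullRequest:
    title: str
    suggestions: List[Suggestion] = field(default_factory=list)
    decision: Optional[Decision] = None
    decided_by: Optional[str] = None
    merged: bool = False


def agent_review(pr: PullRequest, findings: List[Suggestion]) -> None:
    """The agent only attaches suggestions; it has no path to merging."""
    pr.suggestions.extend(findings)


def record_decision(pr: PullRequest, developer: str, decision: Decision) -> None:
    """Store the human decision together with who made it."""
    pr.decision = decision
    pr.decided_by = developer


def merge(pr: PullRequest) -> None:
    """Refuse to merge unless a named developer has approved."""
    if pr.decision is not Decision.APPROVED or not pr.decided_by:
        raise PermissionError("merge requires explicit approval from a developer")
    pr.merged = True


pr = PullRequest(title="Update claims export")
agent_review(pr, [Suggestion("export.py", "unvalidated input", "add schema check")])

try:
    merge(pr)  # no human decision recorded yet
    blocked = False
except PermissionError:
    blocked = True

record_decision(pr, developer="dev@example.com", decision=Decision.APPROVED)
merge(pr)
```

The design choice worth noting is that the agent's function never writes to `merged` or `decision`, so the guardrail is structural and does not depend on the agent behaving well.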

We didn’t build for every developer at once. We started with the workflows where time leaked most — environment provisioning, code review, compliance checks — and expanded from there. Trying to solve everything simultaneously is how platform initiatives die.

What I’d Do Differently Today

This work dates from October 2024. AI has moved fast since then: as Dwarkesh Patel documents in The Scaling Era, we’re in a period where AI capability compounds faster than organizations can absorb it. The AI Agents Plane was designed around specialized, task-specific agents: a code review agent, a security scanning agent, a compliance agent. Today, I’d design for agentic AI from the start. Not separate agents for separate tasks, but orchestrated agents that can plan, reason across layers, and execute multi-step workflows autonomously.

Concretely: instead of an AI agent that reviews code and a separate one that checks compliance, I’d design a single agentic system that understands “this PR touches a regulated data pipeline” and autonomously runs the right reviews, compliance checks, and security scans — then surfaces only what needs human attention. Tools like Claude and Cursor have shifted what’s possible in developer tooling — what I wrote about in The Three Waves of AI as the shift from technology-centric to usability-centric AI. The platform architecture (the 9 layers) still holds. The AI layer within it would be fundamentally more autonomous.
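A rough sketch of that orchestration idea follows. It assumes a simple path-prefix rule stands in for "understanding" what a PR touches, and that each check is a function returning findings. The prefixes, check names, and stub runners are all hypothetical, and a real agentic system would plan with far more context than this.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Finding:
    check: str
    message: str
    needs_human: bool


# Hypothetical path prefixes that mark regulated data pipelines.
REGULATED_PREFIXES = ("pipelines/claims/", "pipelines/pii/")


def touches_regulated_data(changed_files: List[str]) -> bool:
    return any(path.startswith(REGULATED_PREFIXES) for path in changed_files)


def plan_checks(changed_files: List[str]) -> List[str]:
    """Decide which checks to run based on what the PR touches."""
    checks = ["code_review"]
    if touches_regulated_data(changed_files):
        checks += ["compliance", "security_scan"]
    return checks


Runner = Callable[[List[str]], List[Finding]]


def run_plan(changed_files: List[str], runners: Dict[str, Runner]) -> List[Finding]:
    """Run the planned checks and surface only findings that need a human."""
    findings: List[Finding] = []
    for check in plan_checks(changed_files):
        findings.extend(runners[check](changed_files))
    return [finding for finding in findings if finding.needs_human]


# Stub runners with canned output, for illustration only.
def code_review(files: List[str]) -> List[Finding]:
    return [Finding("code_review", "style nit in " + files[0], needs_human=False)]


def compliance(files: List[str]) -> List[Finding]:
    return [Finding("compliance", "PII column exported without masking", needs_human=True)]


def security_scan(files: List[str]) -> List[Finding]:
    return []


RUNNERS = {
    "code_review": code_review,
    "compliance": compliance,
    "security_scan": security_scan,
}

regulated = run_plan(["pipelines/pii/export.py"], RUNNERS)
routine = run_plan(["docs/readme.md"], RUNNERS)
```

Here the regulated change triggers all three checks but surfaces only the compliance finding, while the routine change surfaces nothing, which mirrors the "surface only what needs human attention" goal.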

Key Takeaway

Developer platforms are products. Developer experience is product design. The 9-layer architecture worked because we treated “how developers spend their time” as a design problem, with human-centricity at the core. But the model has a natural boundary: it works for organizations with 300+ engineers, where the coordination cost justifies dedicated platform engineering infrastructure. For a 15-person startup, it would be absurd over-engineering. Knowing when NOT to use a framework is as important as knowing when to apply one.

FAQ

What is an Internal Developer Platform (IDP)?

An IDP is a self-service layer that sits between developers and the underlying infrastructure. It standardizes and automates common development workflows — environment provisioning, CI/CD, testing, observability — so developers focus on building, not configuring. The AI-powered version adds intelligent agents that automate cognitive tasks like code review, security scanning, and compliance enforcement.

Why 9 layers instead of a simpler architecture?

Because developer experience isn’t one problem — it’s nine interconnected problems. Onboarding is different from observability is different from compliance. Collapsing them into fewer layers creates the same monolithic mess we were trying to escape. Each layer has a clear responsibility, clear APIs, and can evolve independently. The complexity lives in the architecture so it doesn’t have to live in the developer’s daily experience.

How does AI-first architecture differ from adding AI features to existing tools?

AI-first means every capability is designed assuming AI is available. It’s not “here’s a code review tool, and oh we added an AI button.” It’s “the code review system is built on language models that understand your codebase, your team’s patterns, and your compliance requirements from day one.” The difference is foundational vs. bolted-on. Bolted-on AI helps incrementally. AI-first architecture enables non-linear productivity gains because every layer can reason, learn, and adapt.