March 16, 2026

What My Face Looks Like to a Machine

A photograph is a lie by omission.

It shows what a human eye might notice in passing, such as texture, warmth, and the slight asymmetry that makes a face feel familiar. What it doesn’t show is what a machine actually sees: an 8-bit intensity value at (x=412, y=203), then another at (x=413, y=203), repeated 307,200 times.
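To make that concrete: in the browser, a canvas hands back pixels as one flat array of bytes, four per pixel (red, green, blue, alpha), row by row. The sketch below reads the brightness at (x=412, y=203) from such a buffer. The function name `luminanceAt` is hypothetical, and the Rec. 709 luma weights are one common way to collapse RGB into a single intensity, not necessarily what this site uses.

```typescript
// Hypothetical helper: brightness of one pixel in a flat RGBA buffer.
// Layout matches canvas ImageData.data: 4 bytes per pixel, rows top to bottom.
function luminanceAt(data: Uint8ClampedArray, width: number, x: number, y: number): number {
  const i = (y * width + x) * 4; // index of this pixel's red byte
  // Rec. 709 luma weights (they sum to 1), so the result stays in 0..255
  return 0.2126 * data[i] + 0.7152 * data[i + 1] + 0.0722 * data[i + 2];
}

const W = 640;
const H = 480;
const frame = new Uint8ClampedArray(W * H * 4); // zero-filled: a black frame
const p = (203 * W + 412) * 4;
frame.set([255, 255, 255, 255], p); // paint the pixel at (412, 203) white

console.log(W * H); // 307200 pixels to read
console.log(luminanceAt(frame, W, 412, 203)); // close to 255 for pure white
```

Every glyph on the machine side ultimately comes from reads like this one, repeated across the whole frame.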

When I rebuilt my personal site last week, I wanted the hero section to stage this split between human and machine vision on my own photograph.

Brief to Self

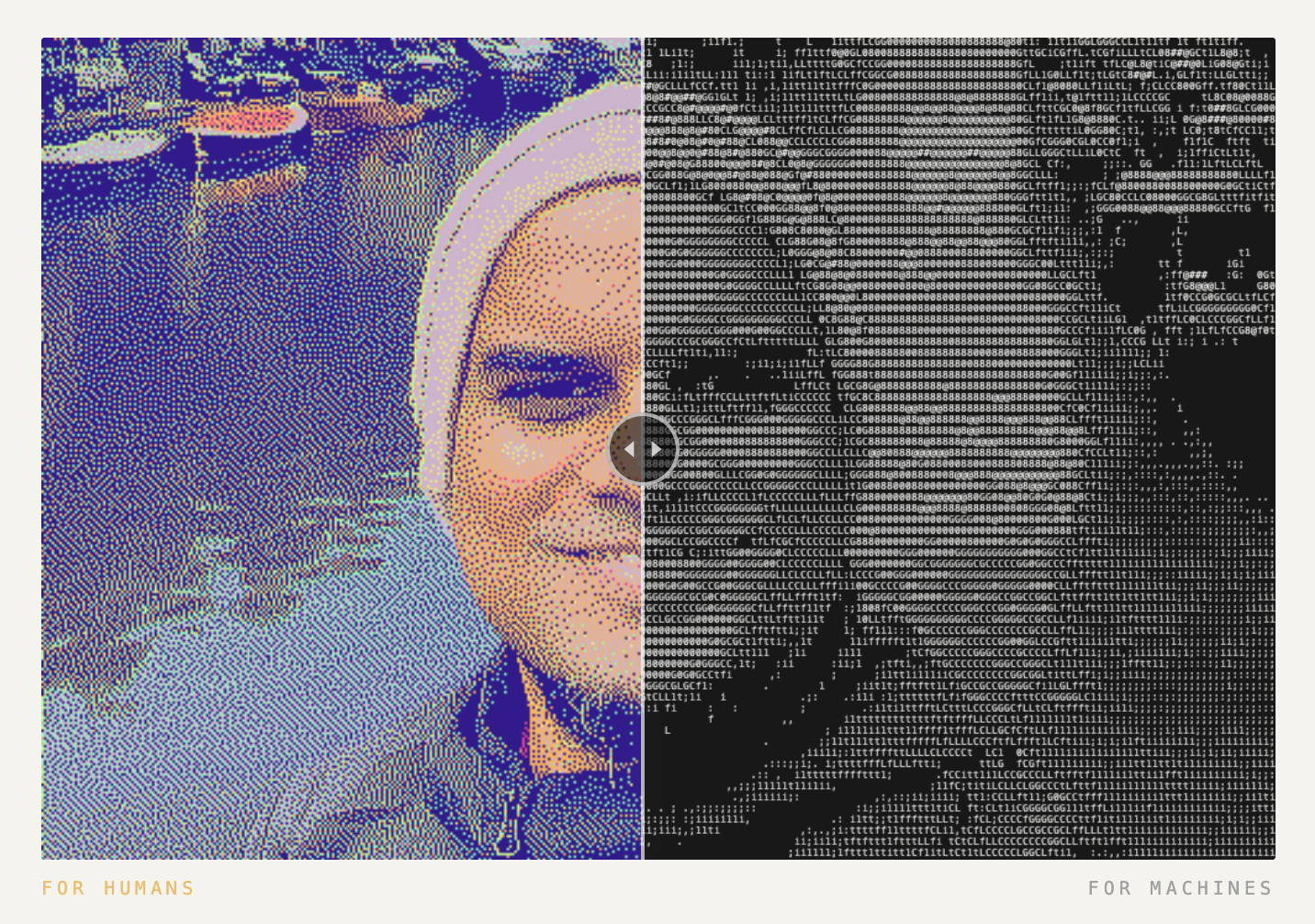

One image. Two interpretations. A slider between them.

Left: a dithered color photograph. Rich, warm, unmistakably human, with the look of a Polaroid.

Right: the same face, remapped to ASCII characters in black and white. An approximation of what a language model “sees.”

The labels underneath: “for humans” on the left, “for machines” on the right.

Simple enough to describe in two sentences. Tricky enough to build wrong four times.

> [IMAGE: Side-by-side — “for humans” panel vs “for machines” panel, with labels visible. Full width.]

What Actually Happened

Version 1 took forty minutes. Technically functional. Aesthetically horrifying: the ASCII side looked like a 2003 command-line novelty project. Dense character fill, no breathing room, facial contours barely recognizable.

I knew it was wrong immediately.

The problem wasn’t the implementation. It was the character ramp.

Most ASCII art algorithms use a ramp optimized for information density — every pixel mapped, every brightness level represented. The result is maximal and ugly. More signal than a human eye can process.
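For comparison, here is roughly what a conventional dense mapping looks like. It is a sketch, not the site's code: the ten-glyph ramp is a widely circulated one, and the sketch assumes light glyphs on a dark background, so brighter pixels get heavier glyphs. Only the darkest tenth of the brightness range maps to a blank.

```typescript
// A conventional dense ramp: ten glyphs from empty to heavy.
// Assumes light glyphs on a dark background (brighter pixel = heavier glyph).
const DENSE_RAMP = " .:-=+*#%@";

// Map a 0..255 luminance to a glyph. Every brightness band gets a visible
// character except the darkest tenth, which gets a space.
function denseGlyph(luma: number): string {
  const t = Math.min(Math.max(luma / 255, 0), 1); // normalize and clamp to 0..1
  const idx = Math.min(DENSE_RAMP.length - 1, Math.floor(t * DENSE_RAMP.length));
  return DENSE_RAMP[idx];
}

// A left-to-right gradient: nearly every cell ends up filled.
const gradient = Array.from({ length: 16 }, (_, i) => denseGlyph(i * 17)).join("");
console.log(JSON.stringify(gradient));
```

Over a full 0..255 sweep, only 26 of the 256 levels come out blank, which is exactly the wall-of-characters look described above.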

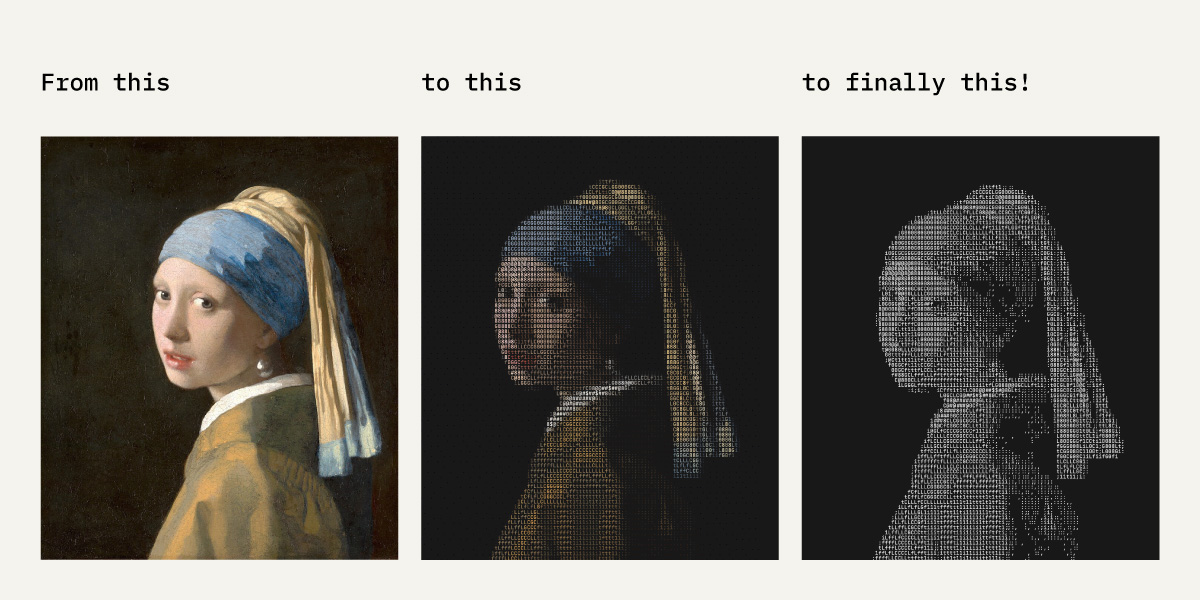

What I wanted was sparse. Characters only where they earn their position. Dark areas staying empty. Highlights getting one precise character. Claude and I tried it on a Vermeer painting first, as practice before applying it to a real photograph.

This isn’t a technical constraint. It’s a taste constraint. And taste constraints are the hardest to articulate to an AI. Taste is an intriguing topic — Anu Atluru argues that taste is eating Silicon Valley. I think she’s right, and I’m exploring why in an upcoming post.

The AI Partnership, Honestly

Claude Code built every version of this slider. After the first, each one took under 15 minutes, across several rounds of feedback and iteration.

What I brought: the direction. The rejection. The vocabulary for what was wrong.

Version 2, I said: “Too dense. Dark areas need to breathe.”

Version 3, I said: “Add a dark floor threshold: anything below 28% brightness renders as empty space, not a character.” This was the insight. It came from twenty minutes of working on Vermeer paintings and asking myself what made them feel uncrowded.
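The dark floor is easy to express in code. In this sketch (an assumption, not the shipped implementation), any pixel below 28% brightness becomes a space, and only the brightness range above the floor is spread over a short, deliberately sparse ramp. The glyph set in `SPARSE_RAMP` and the name `sparseGlyph` are hypothetical; the 0.28 floor is the value from the prompt above.

```typescript
// Hypothetical sparse ramp: few glyphs, light to heavy.
const SPARSE_RAMP = ".:+*#";
// The dark floor from the post: below 28% brightness, render nothing.
const DARK_FLOOR = 0.28;

function sparseGlyph(luma: number, floor: number = DARK_FLOOR): string {
  const t = Math.min(Math.max(luma / 255, 0), 1); // normalize and clamp to 0..1
  if (t < floor) return " "; // dark areas stay empty
  // Rescale floor..1 to 0..1 so the whole ramp is used above the floor.
  const u = (t - floor) / (1 - floor);
  const idx = Math.min(SPARSE_RAMP.length - 1, Math.floor(u * SPARSE_RAMP.length));
  return SPARSE_RAMP[idx];
}

// Count how many of the 256 brightness levels now render as empty space.
let blanks = 0;
for (let v = 0; v <= 255; v++) if (sparseGlyph(v) === " ") blanks++;
console.log(blanks); // 72: every level below 0.28 * 255 (about 71.4)
```

The floor does the real work here: shadows drop out entirely, so the glyphs that remain trace the lit contours of the face.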

Version 4, I said: “The face looks compressed. 7% vertical elongation.” This came from noticing that ASCII character cells are taller than they are wide, so the sampling grid has to compensate for the cell shape; when that compensation is off, faces look squashed. Interestingly, the Vermeer version went through the same iteration.
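The elongation fix comes down to how many rows the grid gets. A sketch, assuming the glyph cell's width-to-height ratio (`cellAspect`) is known: drawn in cells that are taller than wide, the grid keeps the photo's proportions when rows = cols × (image height / image width) × cellAspect, and a 1.07 factor adds the 7% vertical elongation as extra rows. `gridSize` and its parameters are hypothetical names, and the post does not say whether the 7% was applied to the sampling grid or as a CSS stretch.

```typescript
// Hypothetical helper: choose the ASCII grid for an image.
// cellAspect = glyph cell width / height (measure it for your font and line-height).
// verticalStretch = 1.07 adds the post's 7% vertical elongation.
function gridSize(
  imgWidth: number,
  imgHeight: number,
  cols: number,
  cellAspect: number,
  verticalStretch = 1,
): { cols: number; rows: number; sampleW: number; sampleH: number } {
  // Rendered aspect is (rows * cellHeight) / (cols * cellWidth);
  // solve for rows so it matches imgHeight / imgWidth, then apply the stretch.
  const rows = Math.max(
    1,
    Math.round(cols * (imgHeight / imgWidth) * cellAspect * verticalStretch),
  );
  // Each glyph summarizes a sampleW x sampleH block of source pixels.
  return { cols, rows, sampleW: imgWidth / cols, sampleH: imgHeight / rows };
}

console.log(gridSize(640, 480, 120, 0.5).rows); // 45: proportions preserved
console.log(gridSize(640, 480, 120, 0.5, 1.07).rows); // 48: roughly 7% taller
```

If the assumed `cellAspect` is off for the actual font, the output drifts squashed or stretched, which is why a small empirical factor like 1.07 can be the pragmatic fix.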

None of these were planned. They were things I noticed, named, and handed back.

The AI writes code faster than I can type the function signature. I direct what the code should *feel* like. Neither of us gets there alone.

What I Deliberately Didn’t Build

An early idea was to pre-render the ASCII server-side and serve a static image. Faster. Simpler. More predictable.

I killed it.

If the ASCII is client-side — generated live from the photo on every page load — it stays synchronized with the source image automatically. Change the photo, the ASCII updates. No pipeline. No generated file to version.

There’s a deeper reason. The browser generates the ASCII in real time from raw pixel data, which is, in simplified form, how machines actually meet an image: as numbers, not as a picture. A pre-rendered version would break the metaphor.
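Generating on the client means the page reads the photo's pixels at load time and builds the text itself. A minimal sketch, assuming the photo has been drawn to a canvas: `PixelSource` is a hypothetical structural type that a browser `CanvasRenderingContext2D` satisfies (which also keeps the function testable outside a browser), and `glyphFor` stands in for whatever brightness-to-glyph mapping the design calls for. It samples the nearest pixel at each cell's center, the simplest option rather than necessarily the site's.

```typescript
// Hypothetical structural type: a browser CanvasRenderingContext2D fits it,
// and so does a plain object in tests.
interface PixelSource {
  getImageData(
    sx: number,
    sy: number,
    sw: number,
    sh: number,
  ): { data: Uint8ClampedArray; width: number; height: number };
}

// Build the ASCII text from live pixel data: one glyph per grid cell.
function asciiFromPixels(
  src: PixelSource,
  width: number,
  height: number,
  cols: number,
  rows: number,
  glyphFor: (luma: number) => string,
): string {
  const { data } = src.getImageData(0, 0, width, height);
  const lines: string[] = [];
  for (let r = 0; r < rows; r++) {
    let line = "";
    for (let c = 0; c < cols; c++) {
      // Nearest-pixel sample at the center of this cell.
      const x = Math.min(width - 1, Math.floor(((c + 0.5) * width) / cols));
      const y = Math.min(height - 1, Math.floor(((r + 0.5) * height) / rows));
      const i = (y * width + x) * 4;
      // Rec. 709 luma from the RGBA bytes (alpha ignored).
      const luma = 0.2126 * data[i] + 0.7152 * data[i + 1] + 0.0722 * data[i + 2];
      line += glyphFor(luma);
    }
    lines.push(line);
  }
  return lines.join("\n");
}

// Browser wiring (not run here), assuming an <img> and a <pre> on the page:
//   const canvas = document.createElement("canvas");
//   canvas.width = img.naturalWidth;
//   canvas.height = img.naturalHeight;
//   const ctx = canvas.getContext("2d")!;
//   ctx.drawImage(img, 0, 0);
//   pre.textContent = asciiFromPixels(ctx, canvas.width, canvas.height, 120, 48, glyph);
```

One practical caveat: `getImageData` throws a security error on a canvas tainted by a cross-origin image, so the photo has to be same-origin, or served with CORS headers and loaded with `crossOrigin` set.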

What Shipped

A two-panel hero with a draggable slider. Left: warm, human, color. Right: sparse, monochrome, Vermeer-style ASCII.
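The slider itself needs very little logic. One common approach, assumed here rather than taken from the site: stack the two panels, and clip the ASCII panel with CSS `clip-path: inset(...)` so it shows only to the right of the handle. `splitPercent` and `machineClipPath` are hypothetical names.

```typescript
// Convert the pointer's x position into a split percentage across the hero.
function splitPercent(pointerX: number, trackLeft: number, trackWidth: number): number {
  if (trackWidth <= 0) return 50; // degenerate layout: fall back to the middle
  const pct = ((pointerX - trackLeft) / trackWidth) * 100;
  return Math.min(100, Math.max(0, pct)); // clamp so dragging past the edges is harmless
}

// inset(top right bottom left): trimming `percent` from the left edge
// leaves the "for machines" layer visible only to the right of the handle.
function machineClipPath(percent: number): string {
  return `inset(0 0 0 ${percent}%)`;
}

// Browser wiring (not run here), assuming `hero` wraps both panels,
// `handle` is the drag handle, and `machineLayer` is the ASCII panel on top:
//   handle.addEventListener("pointerdown", (e) => handle.setPointerCapture(e.pointerId));
//   handle.addEventListener("pointermove", (e) => {
//     if (!handle.hasPointerCapture(e.pointerId)) return; // only while dragging
//     const rect = hero.getBoundingClientRect();
//     machineLayer.style.clipPath = machineClipPath(splitPercent(e.clientX, rect.left, rect.width));
//   });
```

Clipping instead of resizing keeps both panels pixel-aligned, so the face lines up across the seam as the handle moves.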

Try the slider yourself → here. When you drag toward the machine side, the face doesn’t disappear — it reinterprets. You’re not watching quality degrade. You’re watching a different grammar emerge.

Machines don’t see less than humans. They see differently.

What Compounds

This isn’t just a hero-section choice. It’s a conceptual frame for the whole site.

Every project I’ve built sits at the same boundary: what does a human need versus what does a system need? The recommendation engine. The drug discovery digital twin. The invisible banking layer. Each one is a negotiation between two different grammars of meaning.

The slider makes that visible in two seconds.

If the rest of this build log does its job, you’ll recognize this pattern — the human/machine negotiation, the taste constraint, the deliberate omission — in everything I ship.

What would you build differently if machines were half your audience?

Next week: the particle physics layer in three.js for each project card and project page.