March 27, 2026

How AI Learns You — Part 1: The Personalization Paradox

The most personal thing about how you drive isn’t your playlist.

It isn’t your preferred route, or the temperature you set the cabin to, or whether you have a bumper sticker that says something about your dog.

It’s the half-second before you brake.

That micro-pause, your instinct at a four-way stop, the particular rhythm of how you read a merge, the unspoken negotiation you conduct with every other car on the road: that is you. Your physics. The invisible signature of your attention, your risk tolerance, your relationship with time and space and consequence.

And somewhere in a research lab right now, an AI is learning exactly that.

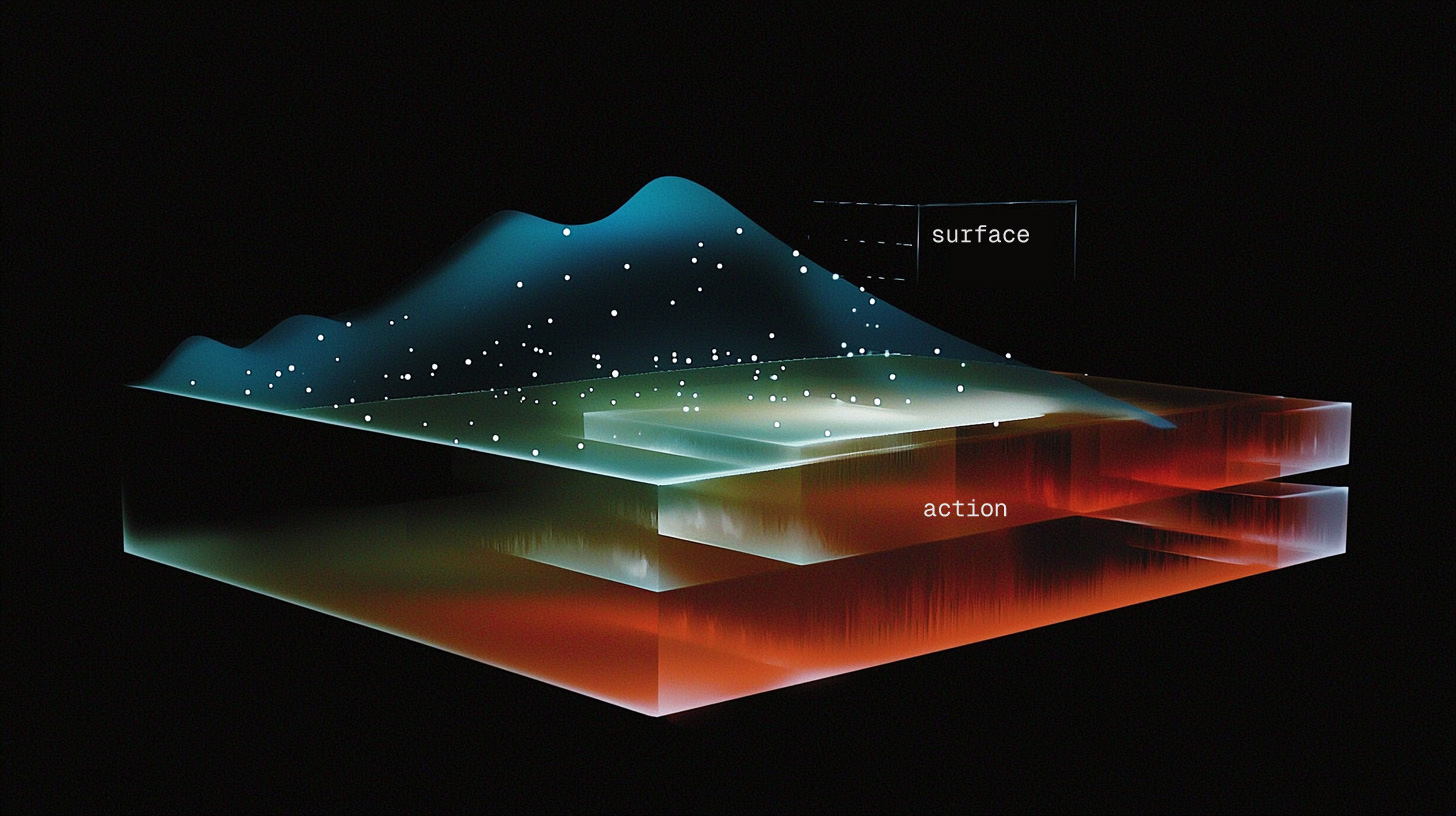

A new paper from autonomous driving researchers describes something called preference alignment at the action layer — training models not just to recognize traffic patterns, but to internalize individual behavioral DNA. How you accelerate. How you yield. The micro-decisions that are so habitual they feel like breathing. Not what you say you prefer. What you actually do, at 80 kilometers an hour, when the situation doesn’t give you time to think.

This is remarkable work. And also, quietly, an indictment of almost everything else being built in AI right now.

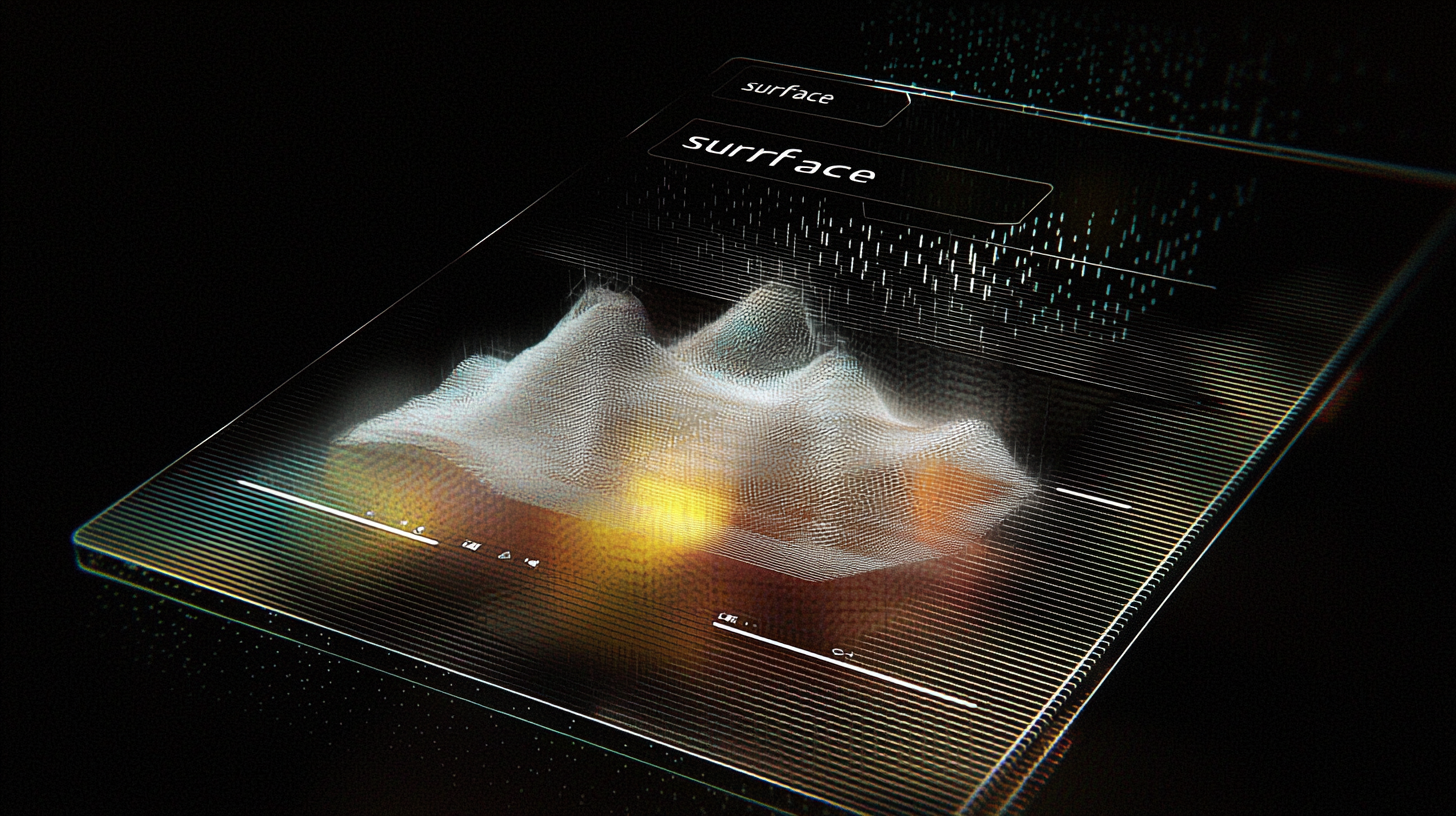

The wrong layer

Here’s what most AI products are doing while autonomous driving researchers are solving personalization at the deepest possible level:

They’re asking you to go through an onboarding flow: fill out a form.

Pick your interests. Select your topics. Rate your expertise from one to five. Tell us what you want to see more of. And then, crucially, they treat that self-report as truth. Forever.

That is surface-layer personalization. It is the equivalent of asking you to describe your driving style in a form. “I consider myself a cautious driver. I prefer to maintain a safe following distance.” And then the car does exactly that, regardless of whether you’re late to the office, relaxed on a Sunday morning grocery drive, or shaken from something that happened an hour ago.

The problem is that people are not very good at knowing what they want (we run on emotions). They’re worse at describing it accurately. And conditions change constantly, in ways no onboarding survey will ever capture. That is the gap.

The autonomous driving research bypasses this entirely. The model doesn’t ask how you drive. It watches. It learns the gap between your stated preference and your revealed behavior. It models the real thing – embodied, habitual, dynamic, you – not the version of you that filled out a form on a Tuesday afternoon when you were feeling optimistic about self-improvement.
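To make the stated-versus-revealed gap concrete, here is a minimal sketch. Everything in it is a hypothetical illustration, not the method from the paper: `stated_gap_m` stands in for a form answer, the observations are made-up following distances in metres, and `revealed_gap` is an illustrative helper that simply takes the median of what was observed in each context.

```python
from statistics import median

# Hypothetical form answer: "I keep about 40 metres of following distance."
stated_gap_m = 40.0

# Made-up observations: (context, following distance in metres).
observations = [
    ("late_for_work", 18.0),
    ("late_for_work", 22.0),
    ("late_for_work", 20.0),
    ("sunday_errand", 38.0),
    ("sunday_errand", 42.0),
    ("sunday_errand", 45.0),
]

def revealed_gap(observations):
    """Median observed following distance per context."""
    by_context = {}
    for context, distance_m in observations:
        by_context.setdefault(context, []).append(distance_m)
    return {context: median(values) for context, values in by_context.items()}

def stated_vs_revealed(stated_m, observations):
    """Revealed minus stated following distance per context, in metres."""
    return {
        context: revealed - stated_m
        for context, revealed in revealed_gap(observations).items()
    }

gaps = stated_vs_revealed(stated_gap_m, observations)
for context, delta in gaps.items():
    print(f"{context}: {delta:+.1f} m relative to the form answer")
```

In this toy data the form answer roughly matches the Sunday drive and misses the rushed commute by a wide margin, which is the point: one self-report cannot describe both.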

The ignored deeper gap

But here’s what the paper points toward that nobody in the product world seems to be focusing on right now.

Action-layer personalization is still personalization of habits. It learns what you tend to do. Which is genuinely powerful. But habits are downstream of something deeper: values.

Why do you leave that particular gap before braking? Maybe because you’re cautious by nature. Maybe because you had a bad accident once and your nervous system remembers even when your mind doesn’t. Maybe because you’re distracted today and your reaction time has slowed in a way you haven’t consciously registered. Maybe all three at once.

Same action. Multiple interpretations. Very different implications for what you actually need.

The difference between an AI that learns your habits and an AI that learns your values is the difference between a system that predicts you and a system that understands you.

To me, this is the real gap. Not surface-layer vs. action-layer. Action-layer vs. meaning.

We are building AI systems that get very good at predicting what you’ll do next, while remaining almost entirely blind to why — to the values, the history, the accumulated context that makes your behavior make sense.

The product opportunity sitting unclaimed

I work in product. And when I look at what’s being built in the AI space right now, I see a lot of very sophisticated prediction and very little genuine understanding.

The systems that know you best — the recommendation engines, the feed curators, the suggestion machines — they know your patterns. They do not know your mind. They have never once seriously tried to model your values.

The autonomous driving research suggests this is solvable. Not easy. Not this year. But theoretically within reach. A model can be trained to internalize not just behavior, but the behavioral signature that emerges from a particular kind of person shaped by a particular set of values and experiences.

What does this look like outside the car?

An AI writing assistant that learns not what topics you write about, but why you write — what you’re trying to say in the world, what you refuse to compromise on, what makes you rewrite a sentence four times even when the first version was fine.

An AI advisor that models not your stated priorities but your revealed ones — where you actually spend your time and attention when nobody is watching and no one is keeping score.

An AI colleague that learns your decision-making instinct. How you think through ambiguity. What you trust. What you won’t cross, even when crossing it would be easier.

That, in my opinion, is the product this research is pointing toward. We haven’t built it yet. But we might.

What it would actually take

It would require, first, that we stop treating personalization as an onboarding problem and start treating it as a continuous learning problem.

Not: tell us who you are when you sign up.

But: we will figure out who you are from how you actually behave, and we will update that understanding every single day.
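As a rough sketch of what "continuous" could mean in practice, one simple option is an exponential moving average: start from the sign-up answer and nudge the estimate toward each new observation. This is an assumption chosen for illustration, not something the research prescribes; the `update_estimate` helper, the `learning_rate` value, and the numbers are all hypothetical.

```python
def update_estimate(current, observed, learning_rate=0.2):
    """Move the running estimate a fraction of the way toward the new observation."""
    return current + learning_rate * (observed - current)

# Start from the sign-up answer, then revise it with each day's behavior.
estimate = 40.0                          # hypothetical onboarding answer
daily_observations = [20.0, 20.0, 20.0]  # made-up daily measurements

history = [estimate]
for observed in daily_observations:
    estimate = update_estimate(estimate, observed)
    history.append(estimate)

print([round(value, 2) for value in history])
```

A higher `learning_rate` trusts recent behavior more and forgets the sign-up answer faster; a lower one changes its mind slowly. Choosing that trade-off is itself a product decision.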

It would require memory — not just storage, but living memory that learns and revises itself. More on that in Part 2.

It would require a genuine humility — the recognition that what you say you want and what you actually want are often meaningfully, importantly different. And that the job of a well-designed AI system is to close that gap without making you feel surveilled or flattened into a profile.

And it would require product teams willing to move the personalization layer much deeper than any team has dared to go so far.

The autonomous driving researchers went there because they had no choice: a self-driving car that doesn’t understand your values well enough to make life-and-death decisions at speed is a car no sane person should step into.

The rest of AI has no such forcing function. So we’ve settled for the onboarding survey. We found the surface layer sufficient and we shipped.

I think a lot about the half-second before we brake.

Not because autonomous driving is the most interesting application of this research — though it genuinely is extraordinary. But because that half-second contains everything.

It is the compressed expression of who you’ve become – your risk tolerance, your trust in other people, your relationship with time and consequence and the unexpected.

An AI that can learn that half-second — not just observe it, but understand what it means — is an AI that has crossed a threshold we haven’t named yet.

We’re getting there. Faster than most people realize. Slower than we need.

Next: Part 2 — The Knowledge Base That Forgets Is Just an Expensive Filing Cabinet

References:

- Zeng, J., et al. “Drive My Way: Preference Alignment of Vision-Language-Action Model for Personalized Driving.” arXiv preprint, 2025. Search arxiv.org by title.

- Ouyang, L., et al. “Training language models to follow instructions with human feedback.” NeurIPS 2022. arxiv.org/abs/2203.02155. Foundational context on preference alignment.

If this sparked something:

If you’re not subscribed yet — this is where I think out loud about product, AI, and the way intelligent systems are being built. And occasionally, built wrong. Free, always. Hit subscribe and Part 2 lands in your inbox when it drops.

If this made you think of someone — a PM, an engineer, a founder building anything with AI — forward it. One good forward beats a hundred passive reads.

And if you want shorter takes between essays, I’m on X at @narenkatakam. Same brain, faster loops.

More of what I work on at narenkatakam.com.